Programmatic SEO: How to Build, Scale, and Actually Track It

Quick takeaways

- Programmatic SEO uses head term + modifier patterns to auto-generate hundreds or thousands of targeted pages.

- The most important thing is having unique, well-structured data. If you rely only on templated content without that, your pages risk being deindexed.

- Google’s 2025/2026 core updates actively demote scaled content with no differentiation or human oversight.

- Publishing pages is step one; tracking rankings across all of them is where most pSEO programmes quietly stall.

- Bulk-capable rank tracking lets you see which templates are working, and which pages to prune before they drag your domain down.

Introduction

Zapier runs over 70,000 programmatic pages. A travel site built 50,000 using a similar strategy and had 98% of them deindexed within three months. Same concept. Opposite outcomes.

The difference wasn’t scale. It was the substance behind each page, and whether anyone was watching what happened after they went live.

Most programmatic SEO guides walk you through how to build the pages. Few talk honestly about when the strategy breaks down, what Google actually penalises in 2026, or how to monitor thousands of pages once they’re out in the world. This guide covers all three.

By the end, you’ll have a clear framework for building a pSEO system with real staying power, and you’ll know exactly what to measure once it’s running.

What is programmatic SEO?

Programmatic SEO is the practice of automatically generating large numbers of pages using structured data and templates, each targeting a specific long-tail keyword variation. Instead of writing individual pages by hand, you build a system that creates them for you.

The companies that made this approach famous — Zillow, TripAdvisor, Zapier — didn’t build their scale page by page. They built data infrastructure and templates, then let the system generate entity or location-specific pages at volume. Zapier’s integration pages alone drive over 610,000 organic visits per month from a subfolder of 590,000+ pages.

Head terms and modifiers: the keyword pattern

Every programmatic SEO strategy starts with a keyword pattern: a core term paired with a modifier. The core term defines the category, like “teacher salary,” while the modifier adds specificity, such as “in Canada” or “in Austin.” When you scale those combinations, you’re covering thousands of distinct queries from a single template.

These modifiers typically fall into a few buckets: location, industry, intent, product type, or use case. The key is knowing which combinations deserve their own pages and which can share a structure. Keyword clustering helps you group related terms and decide how to structure your templates before you build anything.
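As a rough illustration of how a head term and a modifier list expand into a keyword set, here is a minimal Python sketch. The function name `expand_pattern` and the default phrase template are assumptions made for this example, not part of any particular tool.

```python
from itertools import product


def expand_pattern(head_terms, modifiers, template="{head} in {modifier}"):
    """Return one keyword per (head term, modifier) pair."""
    return [
        template.format(head=head, modifier=modifier)
        for head, modifier in product(head_terms, modifiers)
    ]


# The examples from the text: one head term, two location modifiers.
keywords = expand_pattern(["teacher salary"], ["Canada", "Austin"])
print(keywords)
```

Every combination the function emits is only a candidate. Whether it deserves its own page still depends on the demand and data checks described later.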

How pages get generated at scale

The technical setup is usually a structured data source (a spreadsheet, database, or API feed), a page template with variable fields, and a publishing pipeline connecting the two. A common no-code version feeds Airtable or Google Sheets into a CMS or site builder like Webflow via automation tools like Whalesync or Zapier.

The template handles layout, headings, and structure. The data source fills in the unique variables, such as location, price, stat, and entity name, that differentiate each page. That differentiation is the whole game, as we’ll get to shortly.
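The template-plus-data idea can be sketched in a few lines of Python using the standard library’s `string.Template`. The template text, field names, and row values below are placeholders invented for illustration. A real pipeline would read rows from your spreadsheet, database, or API.

```python
from string import Template

# Hypothetical template: each $placeholder is filled from one data row.
PAGE_TEMPLATE = Template(
    "<h1>$role salary in $city</h1>\n"
    "<p>Median $role salary in $city: $median_salary "
    "(based on $sample_size submissions).</p>"
)

# Placeholder rows for illustration only, not real salary data.
rows = [
    {"role": "Teacher", "city": "Austin", "median_salary": "58,000 USD", "sample_size": 120},
    {"role": "Teacher", "city": "Toronto", "median_salary": "71,000 CAD", "sample_size": 95},
]


def render_pages(data_rows):
    """Render one HTML fragment per row; raises KeyError if a field is missing."""
    return [PAGE_TEMPLATE.substitute(row) for row in data_rows]


pages = render_pages(rows)
print(pages[0])
```

Using `substitute` and not `safe_substitute` is deliberate here: a row with a missing field fails loudly, so no page is published with an empty variable.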

When does programmatic SEO actually make sense?

pSEO isn’t a fit for every site or every topic. Used in the wrong context, it creates a crawl budget problem and a content quality problem simultaneously. Two questions cut through most of the noise.

Do you have structured, unique data to power the pages?

This is the single most important prerequisite. Generic information anyone can copy doesn’t constitute unique data.

What works is data only you have access to: Zillow’s proprietary valuation algorithm, Wise’s live exchange rates, or real user-generated benchmarks and reviews. The practitioners building the most defensible pSEO programmes in 2026 are those with genuine data moats. This could be proprietary outputs, live API integrations, or customer-submitted data (salary benchmarks, tool ratings, workflow reviews) turned into indexed pages.

If your data can be scraped and replicated overnight, the pages built on it probably won’t hold rankings for long.

Is there genuine search demand for the long-tail pattern?

Not all head term + modifier combinations have meaningful search volume. Before building 5,000 pages, validate that real people actually search for the pattern at scale. Long-tail keyword research tools can show you volume distributions across modifier types. Look for consistent (even low) demand across many variations, not just a handful of high-volume terms.
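One way to check for “consistent (even low) demand across many variations” is to summarise the volume distribution before building anything. The sketch below assumes you have exported monthly search volumes per keyword from a research tool. The `min_volume` cut-off and the sample numbers are placeholders, not recommendations.

```python
from statistics import median


def demand_summary(volumes, min_volume=10):
    """Summarise how evenly search demand is spread across keyword variations."""
    values = list(volumes.values())
    if not values:
        raise ValueError("volumes must contain at least one keyword")
    total = sum(values)
    return {
        "variations": len(values),
        # Share of variations that clear the minimum monthly volume.
        "share_with_demand": sum(v >= min_volume for v in values) / len(values),
        "median_volume": median(values),
        # How much of the total demand sits in the single biggest keyword.
        "top_keyword_share": max(values) / total if total else 0.0,
    }


# Placeholder volumes for illustration only.
sample = {
    "teacher salary in austin": 100,
    "teacher salary in boise": 0,
    "teacher salary in tulsa": 20,
    "teacher salary in miami": 80,
}
summary = demand_summary(sample)
print(summary)
```

A high `share_with_demand` with a low `top_keyword_share` suggests the pattern itself has demand. The opposite suggests a handful of strong keywords that may be better served by hand-written pages.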

If the pattern has real demand, the data is unique, and the answer each page provides is genuinely different from the next one, pSEO is likely the right call. If you’re mainly trying to manufacture content volume, Google’s recent updates will find it.

What triggers Google penalties for programmatic content?

The community debate around pSEO penalties is one of the most active in SEO right now, and the evidence is clear enough to act on.

Doorway pages vs. genuinely useful pages

Google’s manual action for doorway pages targets pages created purely to rank for specific queries that then funnel users elsewhere, with no unique value per URL. The classic failure: 5,000 city pages for a plumbing service, all sharing the same 150 words with only the city name swapped.

The signal Google watches isn’t automation itself. It’s the value deficit that often comes with it. Thin content, high bounce rates, users immediately returning to search results: these are what trigger action. The automation is irrelevant; the absence of value is not.

What ‘scaled content abuse’ means after the 2025 and 2026 updates

Google’s December 2025 Core Update explicitly targeted large-scale programmatic content generated from templates, bulk prompts, or automated aggregation. It reinforced something that had been building for a while: AI-generated or automated content gets evaluated by the same criteria as anything else — depth, accuracy, originality, and evidence of real expertise.

Pages that reuse the same structure across hundreds of URLs without adding new knowledge, and content with zero human review, are now at significant demotion risk.

One practitioner on Reddit published 3,000 AI-generated pages at once and watched impressions drop to zero within days. The community consensus: pace publishing to 10–50 pages per day, make each page genuinely different, and control what’s crawlable from the start.

A domain-wide penalty is also worth understanding here. Low-quality pSEO pages don’t just underperform individually. One documented e-commerce case lost 73% of site-wide traffic after publishing 18,000 thin category pages. The damage went well beyond the pSEO subfolder.

Quality signals: what holds up vs. what gets penalised

| Signal | What holds up | What gets penalised |
|---|---|---|
| Content per page | 500+ unique words with page-specific data | Under 300 words, same text across all pages |
| Data differentiation | 30–40% unique content per URL, real variables | Only keyword name changes; the rest is identical |
| Publishing pace | 10–50 new pages/day, controlled rollout | 1,000+ pages published overnight |
| User engagement | Low bounce rate, time on page, return visits | Immediate back-to-SERP, zero return traffic |
| Human oversight | Sample review, editorial checks, pruning cycles | Fully automated, never reviewed after publish |
| Crawl control | Noindex low-confidence pages until they show signals | All pages live with no indexing strategy |
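The “data differentiation” row can be approximated before publishing by comparing pages generated from the same template. The sketch below measures the share of a page’s three-word shingles that do not appear in a sibling page. This is one rough proxy chosen for illustration, not how Google measures uniqueness, and the sample strings are invented.

```python
def shingles(text, size=3):
    """Return the set of overlapping word n-grams in the text."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def unique_share(page, other, size=3):
    """Fraction of the page's shingles that do not appear in the other page."""
    own, theirs = shingles(page, size), shingles(other, size)
    if not own:
        return 0.0
    return len(own - theirs) / len(own)


# Two pages that differ only by the swapped city name.
page_a = "plumbing services in austin call us today"
page_b = "plumbing services in dallas call us today"
print(unique_share(page_a, page_b))
```

On very short strings a single swapped word touches a large share of shingles. On a 150-word page with only the city name changed, the same measure falls to a few percent, which is the doorway-page pattern described earlier.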

Building a programmatic SEO system: the core workflow

The technical execution varies by stack, but the underlying logic is consistent across every pSEO programme that actually holds up over time.

Step 1: identify keyword patterns with real search demand

Start with AI-assisted keyword research focused on finding repeatable patterns, not just individual terms. You need a head term that has dozens or hundreds of viable modifiers — locations, industries, product types, use cases — each with its own search demand.

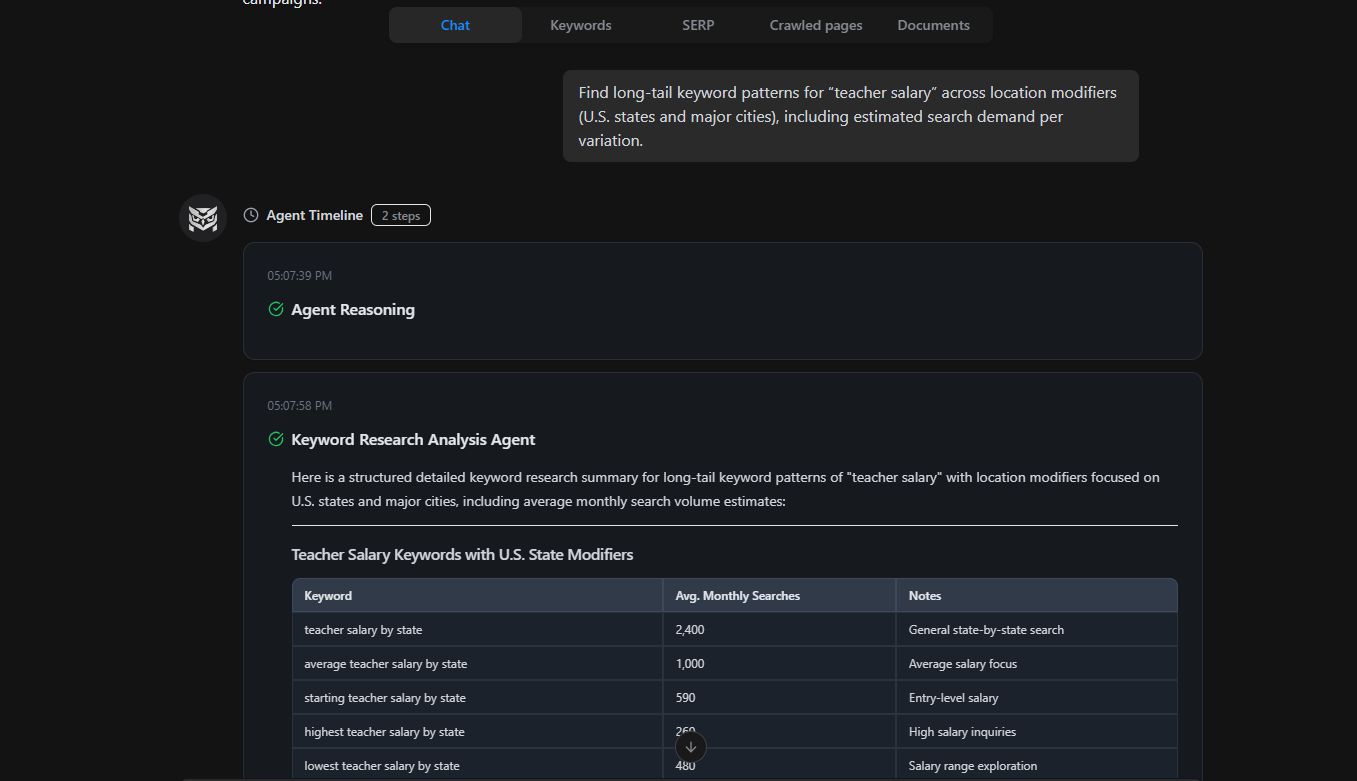

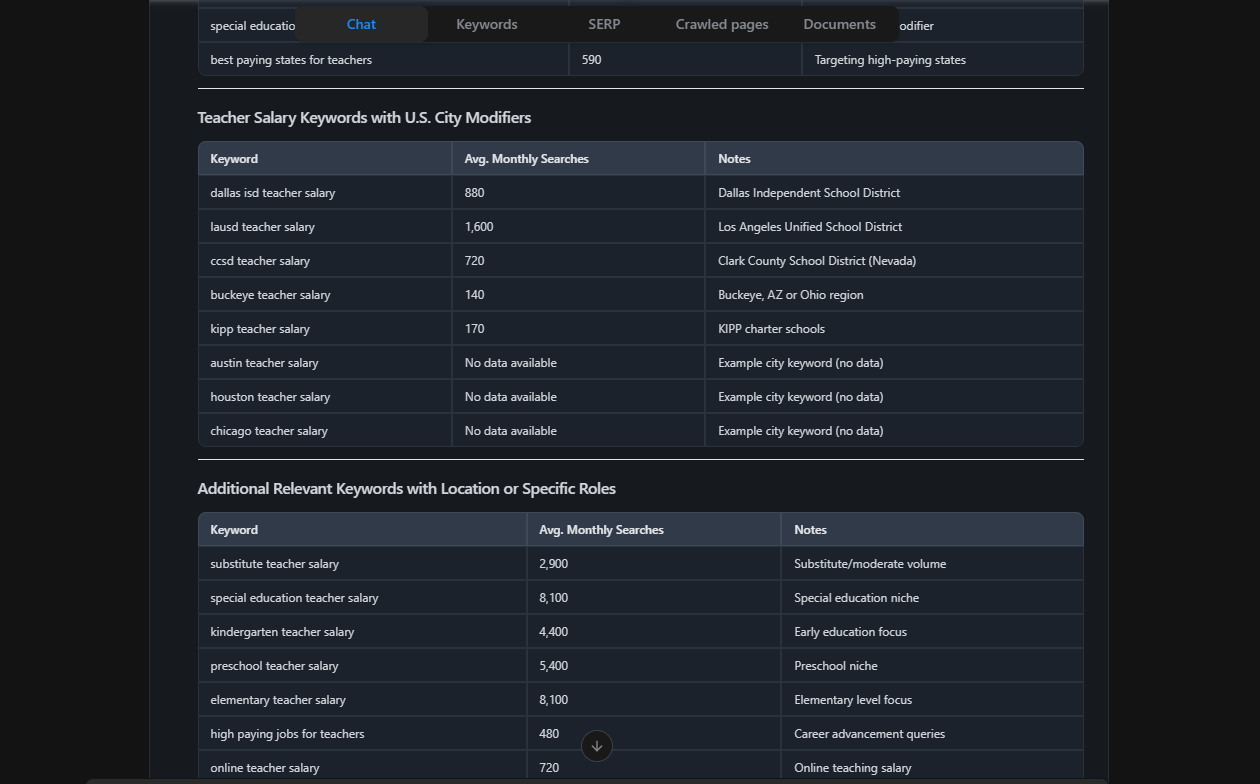

To do this efficiently, head over to NightOwl, Nightwatch’s AI SEO agent. In the chat interface, run a research prompt like:

“Find long-tail keyword patterns for [your head term] across [location/industry/use case] modifiers, including estimated search demand per variation.”

NightOwl’s Research Agent pulls keyword data with location targeting and clustering built in, so you’re not manually sorting through thousands of raw suggestions.

The agent returns grouped keyword clusters by modifier type. Identify which modifier categories have consistent demand across variations: these become your page templates.

Step 2: source or build structured, unique data

Once you have validated keyword patterns, you need data that actually makes each page different. Public datasets anyone can download aren’t enough on their own. You need a proprietary layer on top.

Options that work: internal product or usage data, live API feeds (pricing, weather, availability), customer-submitted data (reviews, salary benchmarks, ratings), or scraped and enriched datasets with a processing layer your competitors haven’t built. The key is that each row in your data source produces a meaningfully different page, not a page that differs only by the swapped keyword.

Step 3: design templates that accommodate unique data per page

Your templates need variable insertion points that pull real data. Each template should include: a data-driven headline, a unique value block populated from your data source, a FAQ section that varies by page context, and a clear answer to the specific query the page is targeting.

Before you finalise your template structure, use NightOwl’s SEO Agent to run a content gap check.

In the chat, prompt: “Analyse the top-ranking pages for [head term + modifier] and identify what content elements they include that I’m missing in my template.”

This surfaces structural gaps before you’ve built out thousands of pages, and fixing them at the template level is dramatically cheaper than retrofitting later.

Also set up advanced schema markup at the template level. Dynamic schema injection, where FAQPage, HowTo, Product, or Review schema is composed based on page type, improves rich snippet eligibility at scale for the schema types Google currently shows as rich results. Pages with structured data also have a structural advantage in AI-native search, which matters more as generative engine optimisation (GEO) becomes a real traffic channel.
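As a sketch of template-level schema injection, the function below builds FAQPage JSON-LD from a page’s question and answer pairs using the schema.org vocabulary. The function name and the sample FAQ are assumptions for this example. How the snippet reaches your page head depends on your CMS.

```python
import json


def faq_jsonld(faqs):
    """Build a FAQPage JSON-LD script tag from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    # Escape "<" so answer text can never close the script tag early.
    payload = json.dumps(data, ensure_ascii=False).replace("<", "\\u003c")
    return '<script type="application/ld+json">' + payload + "</script>"


snippet = faq_jsonld([("How long does indexing take?", "Usually two to four weeks.")])
print(snippet)
```

Because the questions and answers come from the same data row as the page body, the markup stays in sync with the visible FAQ.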

Step 4: technical setup — URL structure, internal linking, and crawl control

Clean URL structure matters at scale: use /category/modifier/ patterns that are consistent and predictable. Avoid dynamic parameters in URLs (e.g. ?city=Lagos). Static URLs are easier for Googlebot to prioritise.
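A minimal sketch of generating consistent /category/modifier/ paths from raw data values follows. The helper names are hypothetical, and the ASCII folding shown here is one common choice, not a requirement.

```python
import re
import unicodedata


def slugify(value):
    """Lowercase, strip accents, and replace runs of other characters with hyphens."""
    ascii_text = (
        unicodedata.normalize("NFKD", value).encode("ascii", "ignore").decode("ascii")
    )
    return re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")


def page_path(category, modifier):
    """Build a static /category/modifier/ path with no query parameters."""
    return f"/{slugify(category)}/{slugify(modifier)}/"


print(page_path("Teacher Salary", "São Paulo"))
```

Two different modifiers can collapse to the same slug, so it is worth checking generated paths for duplicates before publishing.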

Internal linking is equally important. A well-planned internal linking structure distributes authority across your programmatic pages and helps Google understand the hierarchy. Hub pages (one per modifier category) linking to their child pages is the standard pattern that works.
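The hub-and-child pattern can be sketched as a link map computed from page paths. The code below assumes the /category/modifier/ structure described above. The function names and the `max_siblings` parameter are illustrative choices, not a standard.

```python
from collections import defaultdict


def hub_of(path):
    """Return the hub path for a /category/modifier/ page path."""
    category = path.strip("/").split("/")[0]
    return f"/{category}/"


def build_links(paths, max_siblings=3):
    """Map each hub to its children, and each child to its hub plus a few siblings."""
    children = defaultdict(list)
    for path in sorted(paths):
        children[hub_of(path)].append(path)

    links = {hub: list(kids) for hub, kids in children.items()}
    for hub, kids in children.items():
        for i, child in enumerate(kids):
            # Take the next few pages in the group, wrapping around the end.
            nearby = [kids[(i + k) % len(kids)] for k in range(1, max_siblings + 1)]
            siblings = [s for s in dict.fromkeys(nearby) if s != child]
            links[child] = [hub] + siblings
    return links


links = build_links(
    ["/teacher-salary/austin/", "/teacher-salary/canada/", "/nurse-salary/austin/"]
)
print(links["/teacher-salary/austin/"])
```

Every child links up to its hub and every hub links down to its children, so no generated page is left orphaned.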

Crawl control is non-negotiable when you’re publishing at volume. Use XML sitemaps to signal which pages are ready to be indexed. Google’s crawling and indexing documentation describes sitemaps as a way to help it discover new URLs, although listing a page is a hint and not a guarantee that it gets indexed. Noindex low-confidence pages until they have real engagement signals, and pace your publishing to 10–50 pages per day rather than surfacing thousands at once.
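A minimal sketch of a sitemap that lists only the pages you consider ready, built with Python’s standard library, is shown below. The `indexable` flag, the page records, and the example.com domain are assumptions for this example. The XML namespace is the one defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages, base_url):
    """Return sitemap XML listing only pages flagged as indexable."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if not page["indexable"]:
            continue  # held-back pages stay out of the sitemap
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = base_url.rstrip("/") + page["path"]
        ET.SubElement(url, "lastmod").text = page["lastmod"]
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder page records for illustration.
pages = [
    {"path": "/teacher-salary/austin/", "lastmod": "2026-01-05", "indexable": True},
    {"path": "/teacher-salary/boise/", "lastmod": "2026-01-05", "indexable": False},
]
sitemap_xml = build_sitemap(pages, "https://example.com/")
print(sitemap_xml)
```

The sitemaps.org protocol caps a single file at 50,000 URLs, so larger programmes need to split output across several files and reference them from a sitemap index.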

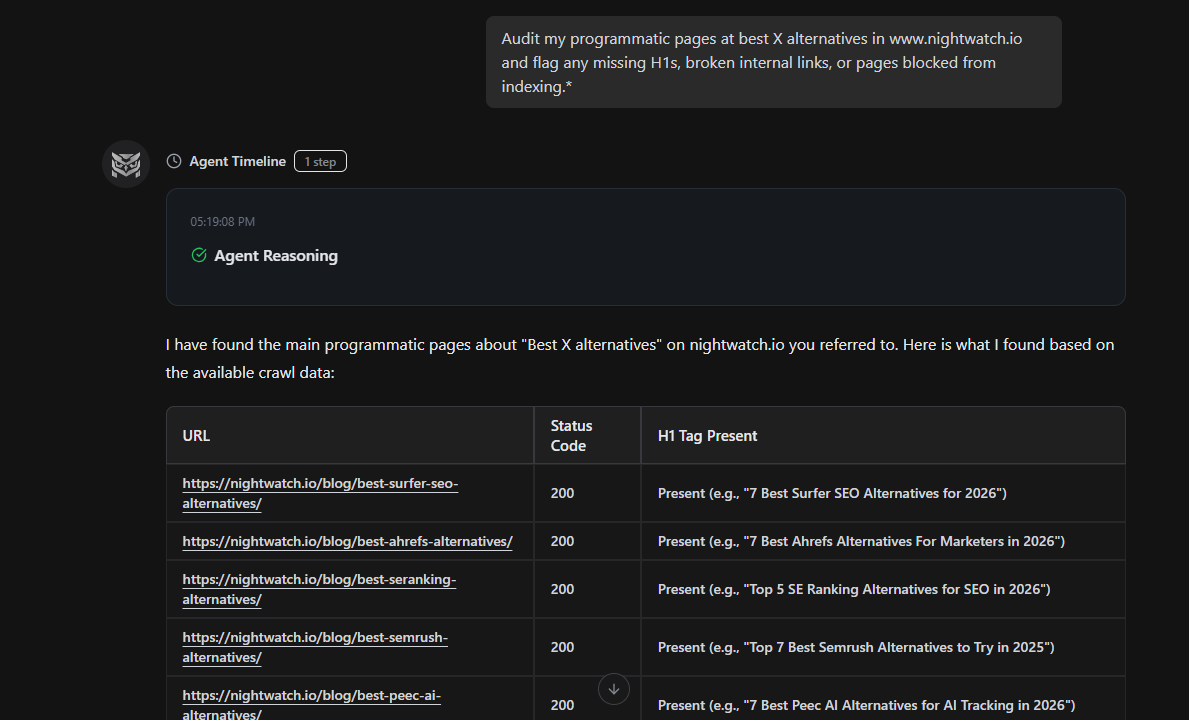

Once your first batch is live, run NightOwl’s Crawling Agent to audit the technical health of your pages before scaling further. In the chat, prompt:

“Audit my programmatic pages targeting [URL pattern] on [your site URL] and flag any missing H1s, broken internal links, or pages blocked from indexing.”

Catching structural issues at 100 pages is far less painful than at 10,000.

How do you track programmatic SEO performance at scale?

Publishing your pages is not the finish line. Most pSEO programmes fail in the six months after launch, when nobody is watching what’s happening across thousands of URLs.

Why standard rank tracking breaks at volume

Manually monitoring rankings for thousands of pages isn’t feasible, and checking a handful of representative keywords gives you a false picture. You need to track at the template level.

That means grouping pages by modifier type so you can see whether your “[service] in [city]” template is performing differently from your “[service] for [industry]” template. Aggregate visibility at that level tells you where to invest and where to prune.

What to monitor: indexed rate, rankings, and engagement signals

Three metrics matter most in the first 90 days.

- Indexed rate: what percentage of your pages are actually in Google’s index. If it’s below 70%, your crawl control or content quality has a problem.

- Average position per template group: are your city pages outperforming your industry pages, or vice versa?

- Engagement signals via Google Search Console (GSC): click-through rate and impressions per page. Low CTR on high-impression pages suggests your titles aren’t matching search intent.
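The first two metrics can be computed per template group from any export that records, for each page, its template, whether it is indexed, and its average position. The sketch below assumes that shape of data. The field names and sample rows are placeholders, and the 70% threshold is the one quoted above.

```python
from collections import defaultdict
from statistics import mean


def template_report(pages, min_indexed_rate=0.70):
    """Aggregate indexed rate and average position per template group."""
    groups = defaultdict(list)
    for page in pages:
        groups[page["template"]].append(page)

    report = {}
    for template, members in groups.items():
        indexed = [p for p in members if p["indexed"]]
        positions = [p["position"] for p in indexed if p["position"] is not None]
        rate = len(indexed) / len(members)
        report[template] = {
            "pages": len(members),
            "indexed_rate": rate,
            "avg_position": mean(positions) if positions else None,
            "needs_attention": rate < min_indexed_rate,
        }
    return report


# Placeholder rows for illustration only.
sample_pages = [
    {"template": "city", "indexed": True, "position": 4},
    {"template": "city", "indexed": True, "position": 8},
    {"template": "city", "indexed": False, "position": None},
    {"template": "industry", "indexed": True, "position": 10},
    {"template": "industry", "indexed": True, "position": None},
]
report = template_report(sample_pages)
print(report)
```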

To do this at scale without going insane, head to your Nightwatch Rank Tracker. Here’s exactly how to set it up:

- Step 1: Bulk import your keyword set. Go to Rank Tracker in your Nightwatch dashboard and use the CSV import to load your full programmatic keyword list. When preparing your import file, group keywords by URL pattern or template type. This makes the next step much easier.

- Step 2: Create Views by template group. Once your keywords are in, use Nightwatch’s Views to group them by modifier type such as city pages, industry pages, or comparison pages. This shifts your analysis away from individual keyword noise and toward template-level performance, with aggregated metrics like average position, visibility, and share of voice.

- Step 3: Set up daily monitoring. Enable daily rank refresh for your most important template groups. Nightwatch’s dropout protection cross-validates across data centres, which means you won’t be chasing false drops that trigger unnecessary interventions.

- Step 4: Prune underperformers monthly. Review performance at the template level. If a group consistently underperforms, whether through low rankings, poor indexation, or weak engagement, it needs a clear decision. Improve the data layer, consolidate thin pages, or noindex and phase them out. This is what separates programmatic SEO strategies that compound from those that slowly decline.
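For Step 1, the import file can be prepared with a short script that sorts keywords by template group. The column names below are placeholders chosen for this sketch and are not Nightwatch’s documented import format, so check the importer’s expected headers before using it.

```python
import csv
import io


def keywords_to_csv(rows):
    """Write (keyword, template_group, url) rows to CSV text, sorted by group."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    # Hypothetical header names; adjust to whatever your rank tracker expects.
    writer.writerow(["keyword", "template_group", "url"])
    for keyword, group, url in sorted(rows, key=lambda r: (r[1], r[0])):
        writer.writerow([keyword, group, url])
    return buffer.getvalue()


# Placeholder rows for illustration.
csv_text = keywords_to_csv(
    [
        ("crm for dentists", "industry", "/crm/dentists/"),
        ("plumber in austin", "city", "/plumber/austin/"),
        ("plumber in dallas", "city", "/plumber/dallas/"),
    ]
)
print(csv_text)
```

Keeping the template group as its own column means the same file can drive both the import and the grouping in Step 2.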

For a deeper view into your SEO reporting across programmatic pages, Nightwatch integrates with Google Analytics and Looker Studio, so you can connect ranking data to traffic and conversion data in the same dashboard.

Programmatic SEO and AI search: what’s changing in 2026

The conversation in pSEO communities has shifted significantly in the past year. The most forward-thinking practitioners are no longer building pages only for Google crawlers. They are building pages designed to be cited by AI answer engines like ChatGPT, Perplexity, and Gemini.

That shift is being reinforced by distribution itself. Reddit now has formal data partnerships with both Google and OpenAI, and user-generated content increasingly shapes how language models construct answers. The practical implication is straightforward: pSEO pages built around structured, citable data, supported by FAQPage schema, clear entity relationships, and strong source signals, are more likely to surface in AI-generated responses as well as in traditional search results.

See the difference between traditional SEO vs AI SEO to understand how evaluation criteria are diverging.

There is also a second visibility layer most pSEO programmes still are not tracking: how your brand appears when AI systems answer queries your pages already rank for. If you are ranking for “[service] in [city]” queries, are those same queries triggering AI responses that mention your brand or ignoring it entirely?

To track this gap, Nightwatch’s AI Tracking monitors brand visibility across ChatGPT, Perplexity, Google AI Mode, and AI Overviews, and links AI mentions back to downstream search performance. At pSEO scale, this is the attribution layer traditional rank tracking cannot provide.

For more on where this is heading, the AI-driven content strategies guide breaks down how search intent is evolving as generative search matures.

Frequently asked questions

How long does programmatic SEO take to show results?

Indexing typically starts within two to four weeks of publishing, assuming your technical setup is clean and you’re using XML sitemaps correctly. Meaningful organic traffic usually appears between four and eight weeks after indexing. Significant ranking improvement and ROI tend to arrive in the three-to-six month range. Programmes that see results faster usually have strong domain authority and genuinely unique data from day one.

How many pages should I publish at once?

The community consensus, backed by documented penalty cases, is 10–50 pages per day for a new programme. Publishing thousands of pages overnight is one of the most reliable ways to trigger a soft penalty. Google discovers them all simultaneously, crawl demand spikes, and low engagement signals compound quickly. Start with a controlled batch of 100–200 pages, monitor indexed rate and engagement in GSC, and scale gradually once you see positive signals.
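A paced rollout can be planned with a simple batching helper. This sketch assumes a flat list of page paths and a fixed daily cap. The default of 25 pages per day is an arbitrary value inside the 10–50 range mentioned above, and the function name is hypothetical.

```python
from datetime import date, timedelta


def publishing_schedule(paths, start, per_day=25):
    """Split page paths into daily batches, starting on the given date."""
    if per_day < 1:
        raise ValueError("per_day must be at least 1")
    schedule = {}
    for offset in range(0, len(paths), per_day):
        day = start + timedelta(days=offset // per_day)
        schedule[day] = paths[offset:offset + per_day]
    return schedule


# 120 placeholder paths released at 50 per day.
plan = publishing_schedule([f"/pages/{i}/" for i in range(120)], date(2026, 1, 5), per_day=50)
for day, batch in plan.items():
    print(day.isoformat(), len(batch))
```

In practice the schedule would pause between batches until indexed rate and engagement for the previous batch look healthy; the plan would not run blindly on the calendar.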

Can I use AI to generate programmatic SEO content?

Yes, but the bar has risen considerably. Google’s December 2025 Core Update was explicit: AI-generated content is evaluated on depth, accuracy, originality, and evidence of expertise, not on whether it was written by a human. Templates built from bulk prompts with no unique data and zero human review are a direct penalty target. The approach that works: AI generates the structure and variable content, a human reviews a sample of each batch, and the data layer provides the genuine differentiation that AI alone can’t manufacture.

What’s the difference between programmatic SEO and regular SEO?

Traditional SEO is manual and precise: you write individual pages targeting specific terms, with full editorial control over every word. Programmatic SEO trades that precision for scale, using systems to generate hundreds or thousands of pages targeting long-tail variations. The risk profile is also different: traditional SEO grows steadily and predictably; pSEO can produce explosive growth or catastrophic failure depending on data quality and execution. Neither approach is universally better. The right choice depends on whether your topic has the structured, scalable data that pSEO requires.

Does programmatic SEO work for B2B SaaS?

It does, and some of the strongest pSEO results in 2025 came from B2B SaaS companies. The highest-performing template types are “vs.” and alternatives pages (capturing high-intent buyers comparing solutions), “[use case] for [industry]” pages targeting specific verticals, and integration pages (if your product connects to other tools). UserPilot grew from 25,000 to 100,000 monthly visitors in ten months using “best [tool] for [use case]” templates. The caveat: B2B pSEO only works if conversion is built into the template design from the start. Traffic that doesn’t convert to trials or leads isn’t worth the infrastructure cost. See best AI SEO tools for the stack most B2B teams are using alongside pSEO programmes.

The bottom line

Programmatic SEO works when the system behind it is built on data nobody else has, templates that give each page something genuinely different to say, and a measurement layer that catches problems before they compound across thousands of URLs.

The programmes that fail aren’t usually failing because of the concept. They’re failing because they skipped the data moat, rushed the publishing pace, or stopped paying attention after launch. Google’s 2025 and 2026 updates haven’t killed pSEO. They’ve just raised the floor on what counts as useful.

If you’re running a programmatic SEO programme — or building one — start a free Nightwatch trial to track rankings across your full keyword set, segment performance by template group, and monitor your brand’s visibility in both traditional search and AI-generated answers.