How to Get Your Brand Cited in ChatGPT, Perplexity & Google AI Overviews

Quick Takeaways

- Reddit is the #1 citation source for LLMs overall; LinkedIn jumped from outside the top 20 to the most-cited domain for professional ChatGPT queries in just three months

- A large-scale analysis of 89,000 cited LinkedIn posts found that 11% of all AI responses cite a LinkedIn post or article

- When researchers analyzed 1,400+ ChatGPT and Perplexity citations for B2B SaaS brands, LLMs pulled from third-party listicles, Reddit threads, and review platforms. Not brand websites.

- Brand citations in AI search are measurable: AI Visibility Score, Share of Voice, average position, and sentiment are all trackable metrics

- The playbook: earn off-domain authority first, then reinforce it on-site

Introduction

You can rank #1 on Google and still be invisible in ChatGPT. That’s not a future problem. It’s happening right now for a lot of brands.

AI search is changing where discovery happens. Referral traffic from AI platforms grew 113% across 50+ clients tracked by Seer Interactive over three months. In the same period, Google referral traffic fell more than 20% for many sites. Brands that appear in AI-generated answers are capturing attention earlier in the buying process, before a user ever runs a search.

The challenge is that getting cited in ChatGPT, Perplexity, or Google AI Overviews doesn’t follow the same logic as ranking in Google. There’s no keyword density formula. There’s no backlink profile to build in the traditional sense. The signal set is different, and most brands haven’t adjusted.

This guide covers where each AI platform actually pulls its citations from, what kind of content gets referenced, and how to build a presence that shows up when AI answers questions in your space. It also covers how to track whether any of it is working.

Why brand citations in AI search are worth your attention

The traffic picture is shifting

Google referral traffic going down isn’t speculative. The data from multiple sources in early 2026 points in the same direction. At the same time, AI SEO is producing measurable referral growth for brands that are appearing in AI-generated responses.

The more useful framing isn’t “AI vs. Google.” It’s that discovery is happening in a new place, and if your brand isn’t cited there, a competitor probably is.

Citation share of voice is the new ranking signal

As a16z put it in their GEO over SEO analysis: it’s no longer just about click-through rates. It’s about reference rates. How often is your brand cited or used as a source in model-generated answers?

As Madhav Mistry put it on LinkedIn: “SEO isn’t dead. It’s just fragmented. AI isn’t just summarizing content. It’s choosing who gets cited.”

The numbers in one place

| Signal | Data point | Source |
|---|---|---|
| Reddit citations in Google AI Overviews | +450% growth from March to June 2025 | AuthorityTech |
| LinkedIn citation rate across AI responses | 11% of all AI responses cite a LinkedIn post or article | Finn Thormeier |
| ChatGPT/Perplexity LinkedIn citation rate | Up to 5x higher than prior baseline | Kim Scaravelli |
| Google referral traffic | Down more than 20% for many sites | Kim Scaravelli |
| AI referral growth (Seer Interactive, 50+ clients) | +113% over 3 months | Wil Reynolds, Seer Interactive |

Where do ChatGPT, Perplexity, and Google AI Overviews actually pull from?

The three platforms behave differently. Treating them as one channel is a mistake.

ChatGPT: LinkedIn now dominates professional queries

Between November 2025 and February 2026, LinkedIn went from outside the top 20 cited domains to the most-cited domain for professional queries on ChatGPT. That’s a rapid repositioning driven by the quality and density of professional content on the platform.

Kim Scaravelli reported on LinkedIn that ChatGPT and Perplexity are citing LinkedIn sources up to 5x more than before. For B2B brands, this is probably the highest-leverage channel to focus on right now.

Perplexity: Reddit-first, then third-party sources

Reddit is Perplexity’s top citation source. When Perplexity answers a question, it’s pulling heavily from subreddit discussions, community threads, and third-party listicles. Not brand homepages.

An analysis of 1,400+ ChatGPT and Perplexity citations for B2B SaaS brands found that LLMs consistently pull from G2, Capterra, comparison pages, and Reddit threads. Your brand’s own website rarely appears as a direct citation source. Third-party validation is what gets referenced.

As one r/GenerativeSEOstrategy thread put it: “AI systems don’t rely on a single source. They look at overall signals. Being referenced in multiple places matters just as much as what’s on your site.”

Google AI Overviews: traditional authority signals, with inconsistencies

Google AI Overviews still weighs traditional SEO authority signals more heavily than ChatGPT or Perplexity. Domain authority, backlink profiles, and structured content still matter here.

That said, quality control is uneven. Amsive’s Lily Ray has documented the spam problem in Google AI Overviews. Low-quality and AI-generated content is finding its way into citations more than Google would likely admit. For brands publishing genuinely useful, well-sourced content, that’s actually an opportunity.

For a deeper look at how AI Overviews work and what gets surfaced, Nightwatch’s Google AI Overviews guide covers the mechanics in detail.

What all three share

None of these platforms primarily cite your homepage. They cite specific pages, third-party mentions, community content, and comparison resources. The brands that appear consistently in AI answers have built a presence across multiple types of external content, not just a well-optimized website.

How to build a citation footprint

The LinkedIn long-form play

An analysis of 89,000 cited LinkedIn posts found that educational, original long-form content (500 to 2,000 words) accounts for the largest share of AI citations. Mid-length posts (50 to 299 words) also perform well. Reshares and branded announcements rarely get cited.

Finn Thormeier’s breakdown of the research highlighted a detail worth paying attention to: LinkedIn posts show high semantic similarity scores in AI responses, meaning AI often mirrors the meaning of your original post when citing it. If you publish original, well-structured analysis on LinkedIn and get cited, you have more influence over the narrative than you might expect.

The format that gets cited most often: practical knowledge-sharing, original analysis, and educational content. Not thought leadership fluff. Not reposts.

Reddit-first GEO

Since Reddit is Perplexity’s top citation source and plays a meaningful role in how other LLMs weight community signals, building presence in relevant subreddits before optimizing your own site makes strategic sense.

That doesn’t mean dropping links in every thread. It means contributing genuinely useful answers in subreddits where your audience already asks questions. The brands that get cited in AI answers are often the ones with a documented presence in communities: threads that reference them, discussions that mention them, comparisons that include them.

Third-party citation seeds

The citations analysis is clear: LLMs pull from G2, Capterra, comparison listicles, and industry press. If your brand isn’t appearing on those surfaces, you’re not in the citation pool for a large share of AI queries.

For B2B SaaS brands, the highest-ROI targets are:

- G2 and Capterra profiles with substantive, up-to-date reviews

- “Best [category] for [use case]” articles on industry publications

- Comparison and alternatives pages (ideally ones that rank independently)

- Niche industry newsletters or directories that LLMs have indexed

Entity and reputation hygiene

This one catches brands off guard. LLMs don’t just look at your best content. They blend sources. A stale company description, an outdated Wikipedia page, or a negative review from years ago can surface in AI responses and shape how your brand is presented.

Seer Interactive ran an experiment where AI surfaced a single bad review from 24 years ago in responses about their brand. Wil Reynolds wrote about the findings: off-site reputation and review hygiene now matter more than on-site optimization for controlling your AI narrative.

The practical implication: audit your third-party profiles, respond to old reviews, update your LinkedIn company page, and make sure any entity information about your brand across the web is accurate and current. This connects directly to E-E-A-T optimization and entity-based SEO, both of which influence how AI models evaluate brand authority. Nightwatch’s Citation Intelligence maps exactly which of your rankings are driving AI citations — so you can protect the pages that matter most.

Know what AI is being asked in your space

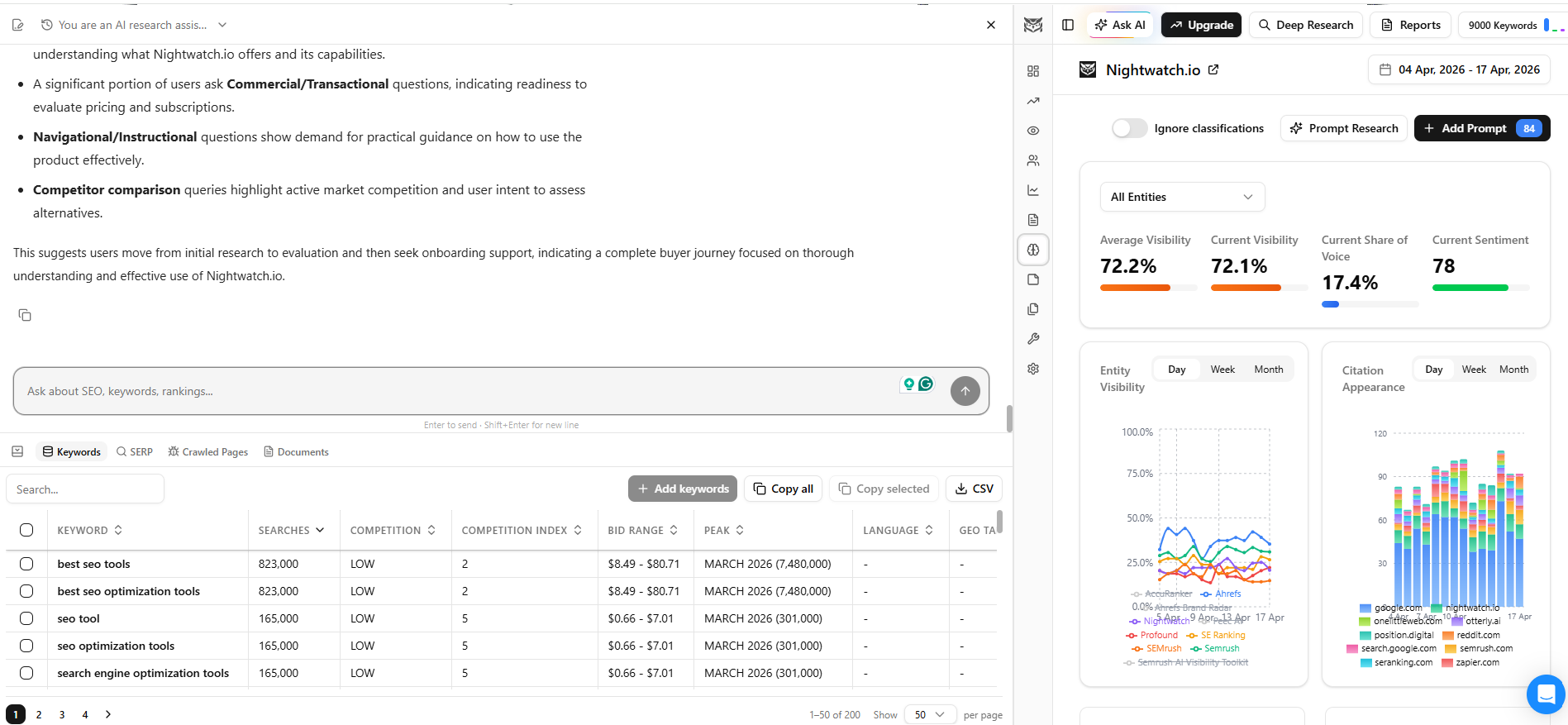

Before you decide what content to publish, it helps to know what questions AI models are actually answering in your niche. Nightwatch’s Prompt Research feature does this automatically. It generates the relevant prompts AI is being asked for any topic or industry, so you can build content around the queries that are already driving AI-generated answers.

Head over to Nightwatch’s LLM tracker and run Prompt Research for your category.

The output gives you a prioritized list of questions to target across LinkedIn, Reddit, and your own site.

What content formats get cited, and what doesn’t

Original educational content vs. branded announcements

The research on what gets cited points clearly in one direction: AI models favor original, educational content over promotional material. Product announcements, brand news, and press releases rarely appear as citations. Analysis, how-to content, and opinion pieces backed by data do.

The 89,000-post analysis found that 54 to 64% of cited posts focus on practical knowledge sharing. Virality doesn’t predict citation rate. Relevance and consistency do.

Why “best X for Y” formats get pulled into AI responses

When a user asks ChatGPT or Perplexity “what’s the best CRM for a small sales team?”, the platform needs to answer a comparison question. It pulls from comparison and listicle content because that’s what directly answers the query format.

For B2B SaaS brands, the content formats that consistently win citations follow a clear pattern: competitor reviews, “best [category] for [industry]” pages, alternatives comparisons, and integration matrices. These formats exist to answer the exact question structure LLMs handle most.

If your brand isn’t appearing in those formats, either on your own site or in third-party coverage, you’re missing the citation pool for a significant share of bottom-of-funnel queries.

Semantic similarity and narrative control

One underrated aspect of LinkedIn citations is what Finn Thormeier highlighted in his analysis: the semantic similarity between cited LinkedIn posts and AI-generated responses is high. AI responses often mirror the meaning of the original post.

If you publish a well-structured LinkedIn article explaining how your category works and what separates good solutions from bad ones, and that post gets cited, the AI response will likely reflect your framing. That’s a meaningful form of influence over how your brand and category are presented. More so than a blog post that gets indexed but rarely cited.

Does publishing an llms.txt file help?

The community consensus is: probably not yet, but it’s worth doing anyway.

llms.txt is a proposed standard similar to robots.txt, intended to give LLMs guidance on how to read and cite your site. The cost of implementing it is minimal, but as Digiday notes, there’s still no stable ranking system inside LLMs, and most GEO tactics remain probabilistic at best. Ship it, but don’t count it as a meaningful citation lever. The actual work is in content, platform presence, and entity hygiene.
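For reference, here is a minimal sketch of what generating such a file could look like. It assumes the markdown layout described in the llms.txt proposal (an H1 with the site name, a blockquote summary, then H2 sections containing link lists). The helper name `build_llms_txt`, the company name, and the example.com URL are all hypothetical placeholders, not part of any tool mentioned in this guide.

```python
def build_llms_txt(site_name, summary, sections):
    """Render a minimal llms.txt body.

    sections maps a heading to a list of (title, url, note) tuples.
    The layout follows the proposed format; treat it as an assumption,
    since the proposal may change.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            # Each entry is a markdown link followed by a short note.
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    # Collapse trailing blank lines into a single final newline.
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical example values, for illustration only.
example = build_llms_txt(
    "Example Co",
    "Example Co makes a hypothetical rank-tracking tool.",
    {"Docs": [("Getting started", "https://example.com/docs/start.md", "setup guide")]},
)
print(example)
```

The output would be saved as `llms.txt` at the site root. Whether any given LLM actually reads it is, as noted above, unconfirmed.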

How to track your brand citations in AI search

Why manual spot-checks don’t scale

You can open ChatGPT and type “what’s the best [your category] tool?” and see if your brand appears. That tells you almost nothing. It’s one prompt, one session, one moment in time. AI responses vary by phrasing, session context, and model version. Doing this manually across ChatGPT, Perplexity, and Google AI Overviews for dozens of relevant prompts isn’t a tracking strategy. It’s a spot-check.

Real tracking requires running consistent prompts at scale, across platforms, and measuring change over time.
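To make "consistent prompts at scale" concrete, here is a rough sketch of the loop a tracking setup performs. It is not how Nightwatch or any other tool is implemented. The `providers` mapping, the `Observation` record, and the stub provider are hypothetical, and a real setup would call each platform's own API where the stub sits.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, Dict, List


@dataclass
class Observation:
    platform: str
    prompt: str
    run: int
    checked_at: str      # ISO timestamp, so runs can be compared over time
    brand_found: bool


def run_prompt_set(
    prompts: List[str],
    providers: Dict[str, Callable[[str], str]],
    brand: str,
    runs_per_prompt: int = 3,
) -> List[Observation]:
    """Ask every provider every prompt several times and record brand presence."""
    observations = []
    for platform, ask in providers.items():
        for prompt in prompts:
            # Repeat each prompt because answers vary between sessions.
            for run in range(runs_per_prompt):
                answer = ask(prompt)
                observations.append(
                    Observation(
                        platform=platform,
                        prompt=prompt,
                        run=run,
                        checked_at=datetime.now(timezone.utc).isoformat(),
                        brand_found=brand.lower() in answer.lower(),
                    )
                )
    return observations


# Stub standing in for a real platform call (assumption for illustration).
def fake_provider(prompt: str) -> str:
    return "Popular options include Acme CRM and OtherTool."


obs = run_prompt_set(
    ["What's the best CRM for a small sales team?"],
    {"stub": fake_provider},
    brand="Acme CRM",
    runs_per_prompt=2,
)
print(len(obs), all(o.brand_found for o in obs))
```

Storing the timestamped observations is what turns a spot-check into a trend line. A plain substring match is a simplification, so real tracking would need to handle brand name variants.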

What to measure

The metrics that matter for AI citation tracking are:

- AI Visibility Score: the percentage of tracked prompts where your brand appears in an AI response. This is your baseline visibility number.

- Share of Voice: how your brand’s citation rate compares to competitors across the same prompt set. You might appear in 30% of prompts, but if a competitor appears in 60%, that gap matters.

- Average position: where in an AI response your brand typically appears. A citation in the first sentence carries more weight than a mention buried in a list of ten options.

- Sentiment: whether AI references to your brand are positive, neutral, or comparative. A citation that frames you negatively is worse than no citation.
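The first three metrics reduce to simple arithmetic once you have per-prompt results. The sketch below assumes each result records the brands an AI response mentioned, in order of appearance. That data shape is hypothetical, and the Share of Voice formula (your appearances divided by all tracked brands' appearances) is one common definition, not necessarily the one any particular tool uses. Sentiment is left out because it needs a classifier rather than arithmetic.

```python
def visibility_score(results, brand):
    """Percentage of tracked prompts whose response mentions the brand."""
    hits = sum(1 for r in results if brand in r["brands"])
    return 100.0 * hits / len(results)


def share_of_voice(results, brand):
    """Brand appearances as a percentage of all brand appearances (assumed definition)."""
    total = sum(len(r["brands"]) for r in results)
    mine = sum(1 for r in results if brand in r["brands"])
    return 100.0 * mine / total if total else 0.0


def average_position(results, brand):
    """Mean 1-based position of the brand in responses where it appears."""
    positions = [r["brands"].index(brand) + 1 for r in results if brand in r["brands"]]
    return sum(positions) / len(positions) if positions else None


# Hypothetical sample: four tracked prompts, brands listed in order of appearance.
results = [
    {"prompt": "p1", "brands": ["Acme", "Rival"]},
    {"prompt": "p2", "brands": ["Rival"]},
    {"prompt": "p3", "brands": ["Rival", "Other", "Acme"]},
    {"prompt": "p4", "brands": []},
]

print(visibility_score(results, "Acme"))   # 50.0
print(visibility_score(results, "Rival"))  # 75.0
print(average_position(results, "Acme"))   # 2.0
```

In this sample, Acme appears in half the prompts while Rival appears in three quarters, which is the kind of gap the Share of Voice comparison is meant to expose.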

Tracking AI citations with Nightwatch

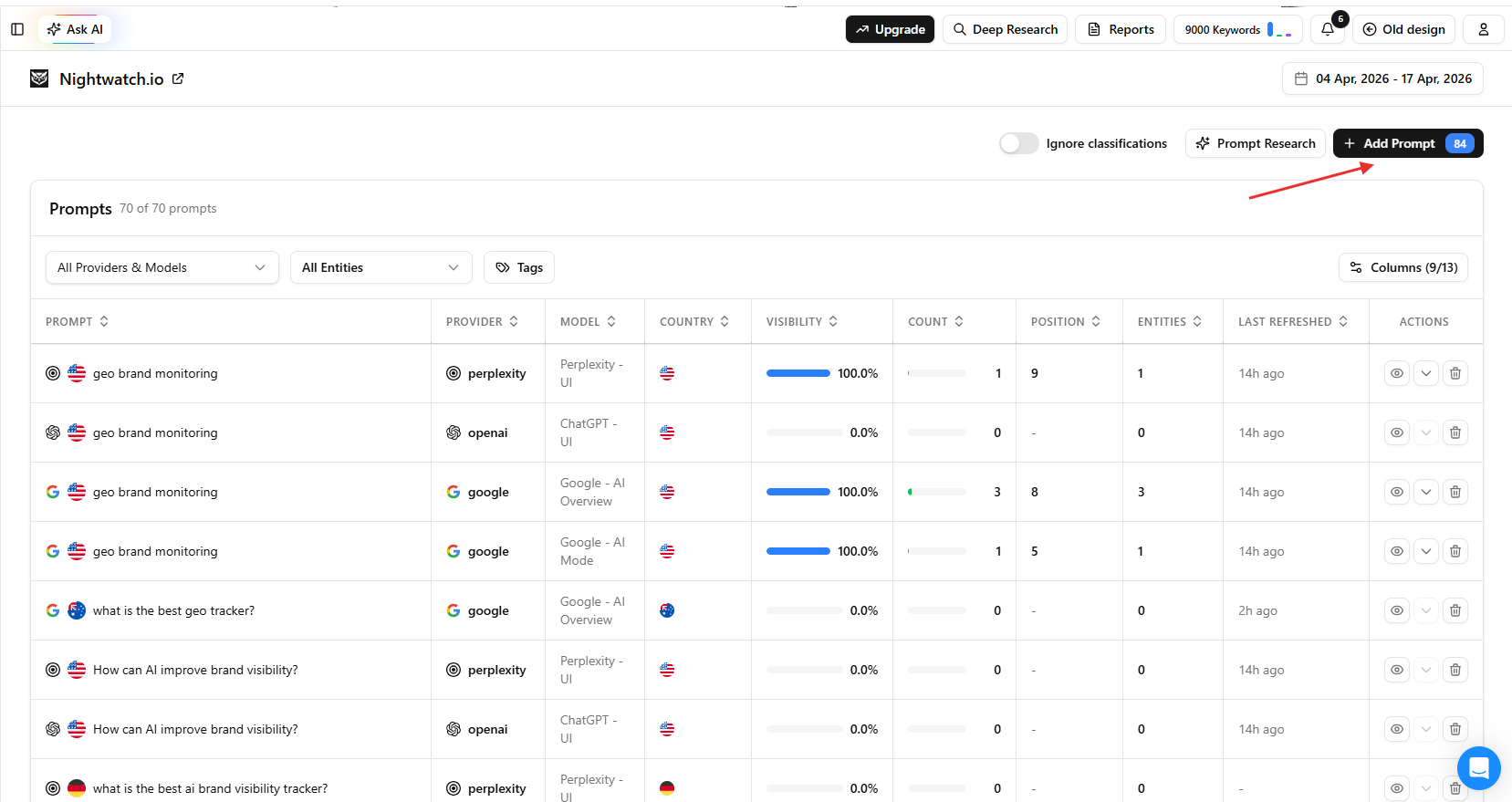

Nightwatch’s AI & LLM Tracker monitors brand visibility across ChatGPT, Perplexity, Google AI Mode, and AI Overviews in a single dashboard. Here’s how to set it up:


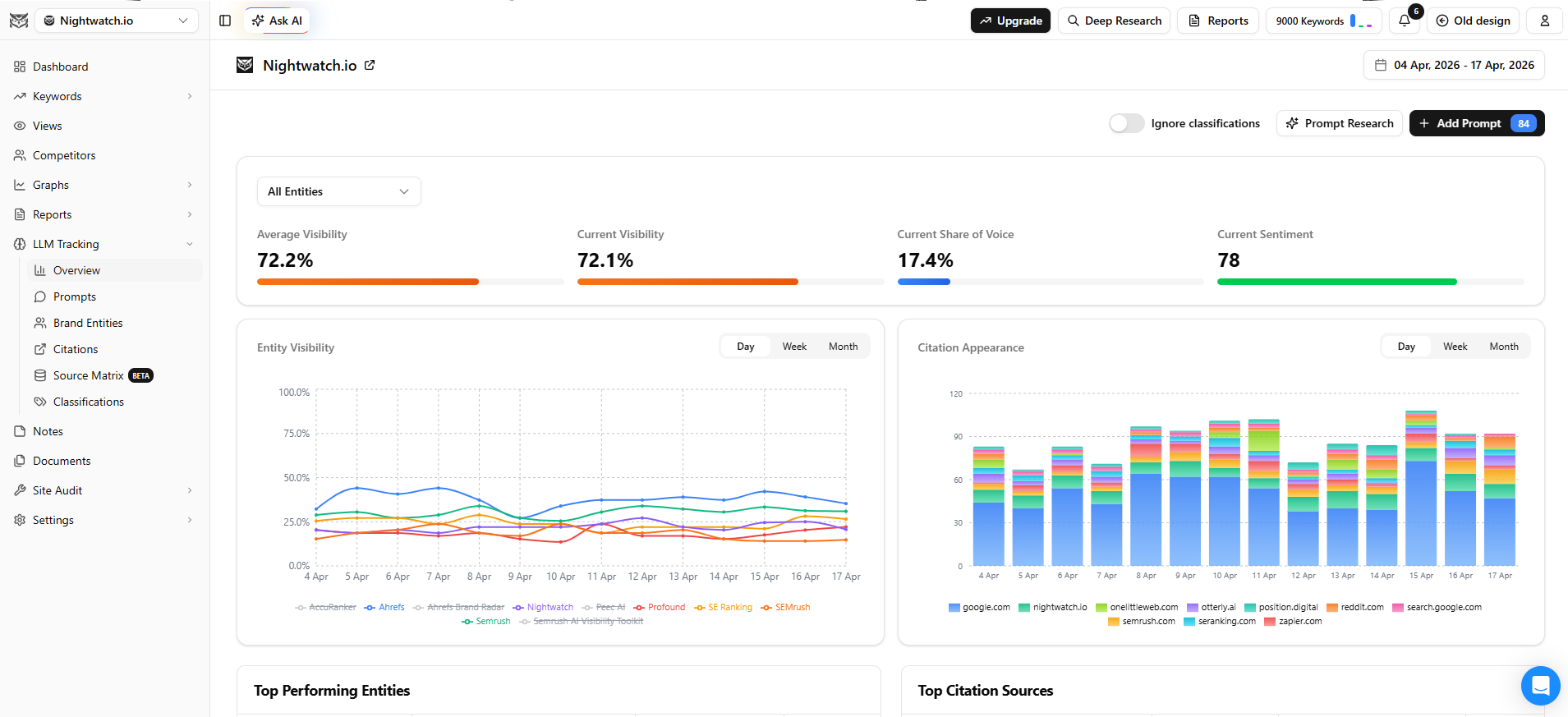

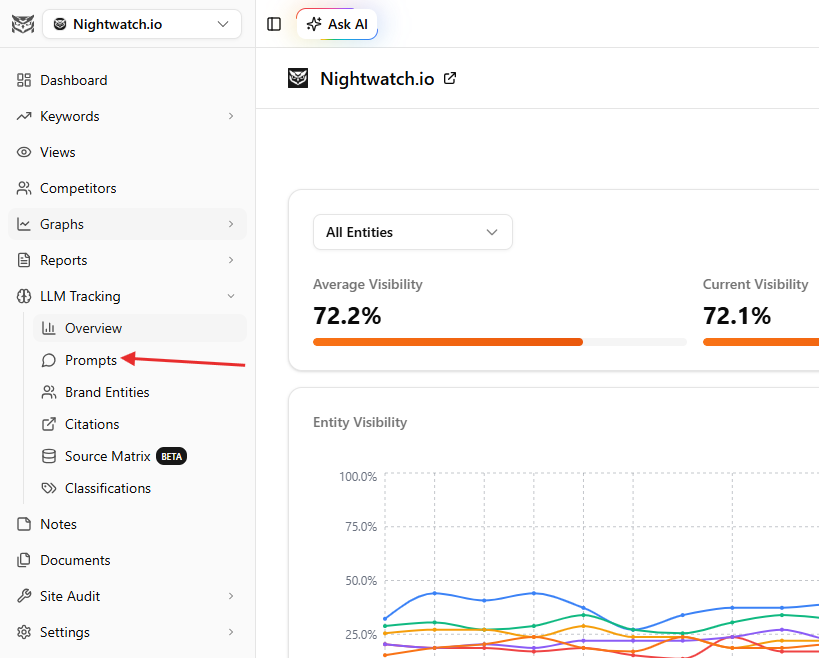

Step 1: Open LLM tracking and review the overview dashboard. Inside your Nightwatch account, open your website and navigate to the LLM Tracking section.

The overview dashboard shows your most important metrics at a glance: average visibility, share of voice, sentiment, entity visibility, brand performance over time, and domain distribution across citations.

Below that, you’ll see top-performing entities and citations broken down by impact.

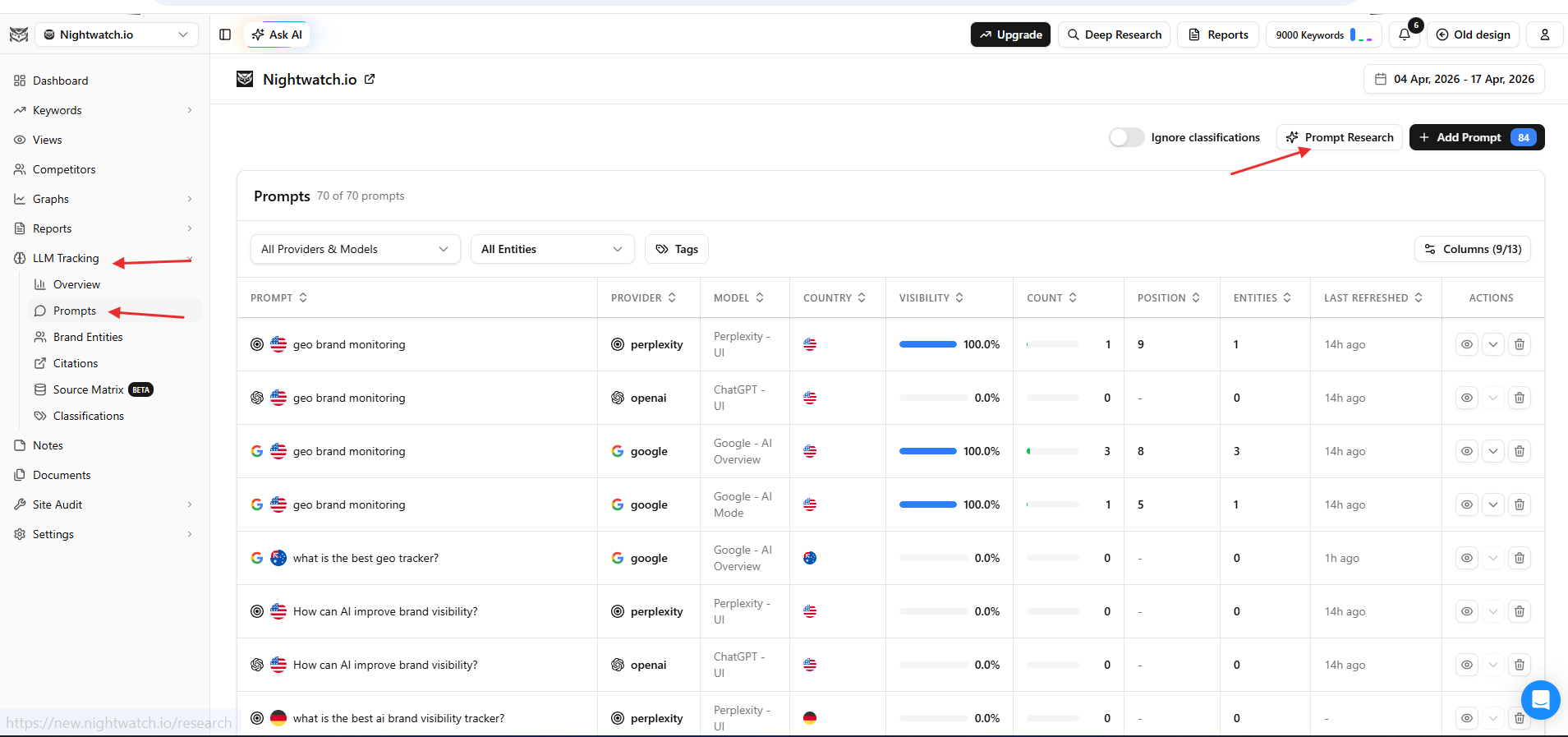

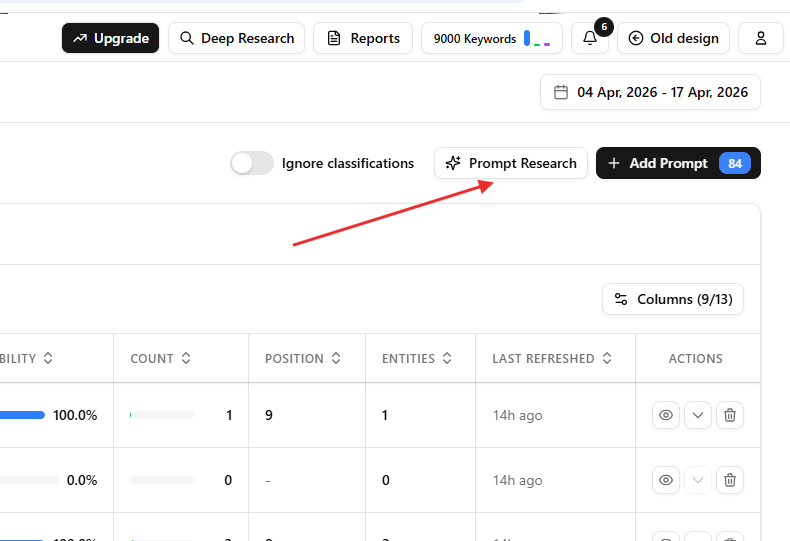

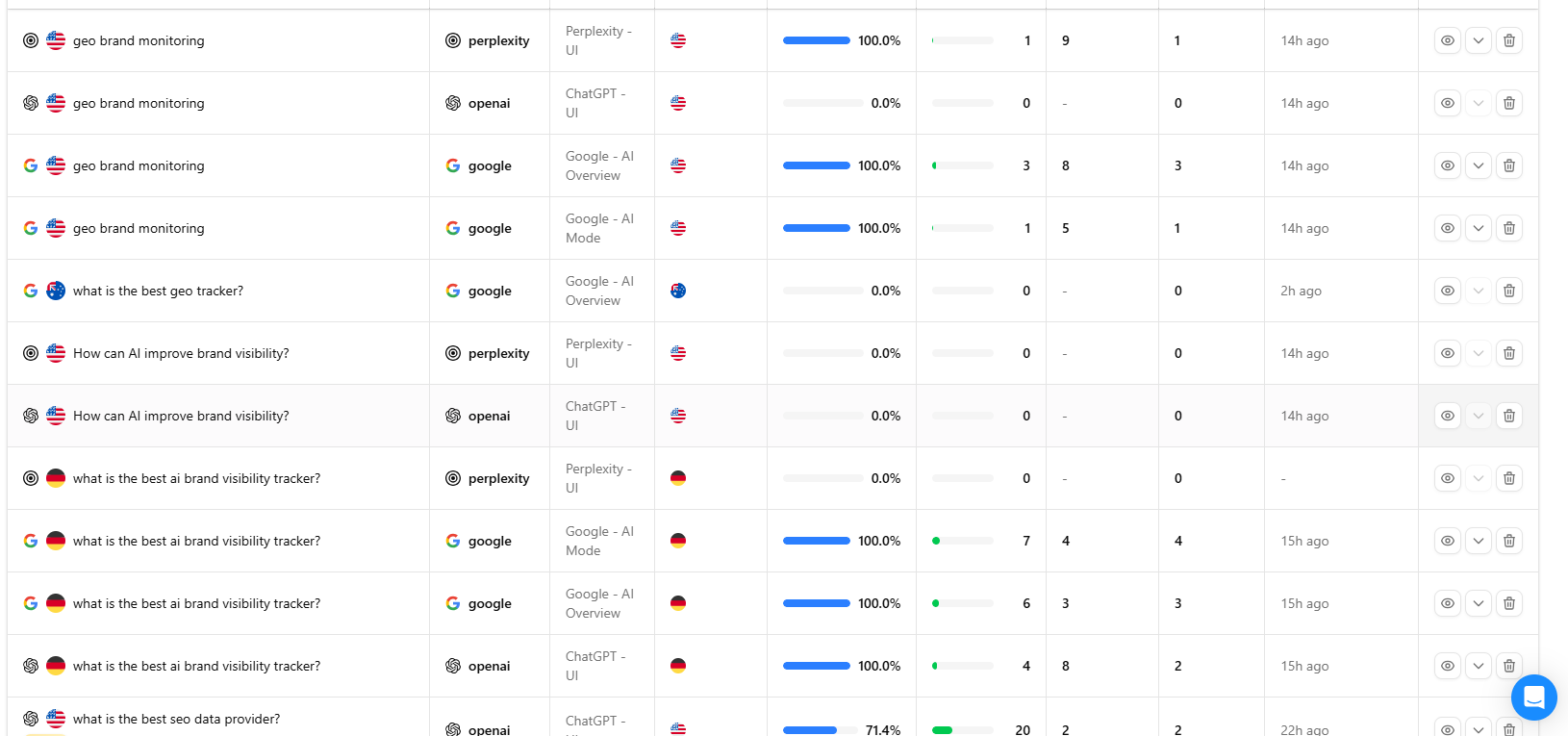

Step 2: Configure your prompts. Go to the Prompts section to set up what you’re tracking.

Click “Add Prompt”, enter the questions you want to monitor, then select the AI providers and location filters you need.

If you’re not sure which prompts to track, use Prompt Research inside Nightwatch. It runs through an agentic flow to generate relevant prompts for your category automatically.


Step 3: Analyze prompt-level data. Once data starts coming in, open any individual prompt to see position rankings, sentiment scores, and the full AI-generated response (click the eye icon to read it).

You can also rewind to historical responses to track how your brand’s presence in that prompt has changed over time.

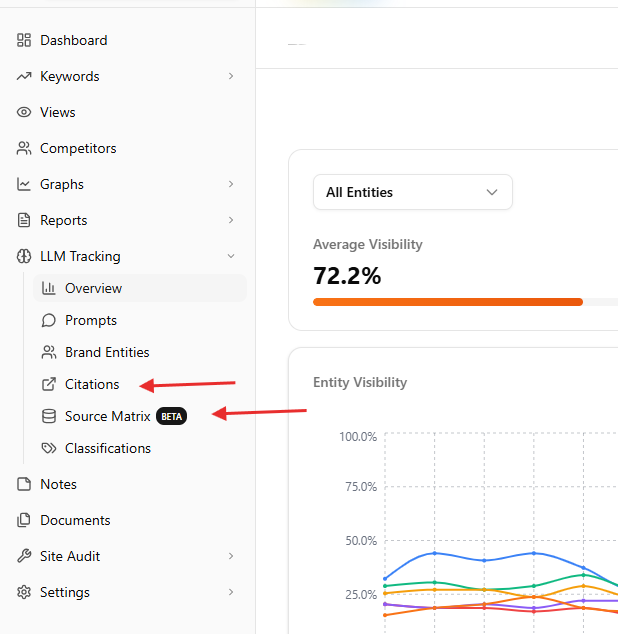

Step 4: Go deeper with Citations and Source Matrix. For a more detailed view, use the Citation Analysis feature, which applies Nightwatch’s AI to break down specific citation situations. The Source Matrix functionality gives you a broader overview of how mentions and sentiment are distributed across all the websites your crawler is monitoring.

The Citations view aggregates this by domain, and you can drill down to specific pages to understand how much influence a particular source has on how your brand appears in AI responses, and what kind of content you should be producing to shift it.

For a broader comparison of tools in this category, Nightwatch’s roundup of AI rank and brand tracking tools covers what’s available and what each one measures. You can also explore LLM tracking tools and the best AI search monitoring tools for additional context.

Frequently asked questions

Does optimizing for AI citations hurt traditional SEO?

No. Most of what drives AI citations (original content, third-party credibility, strong entity presence, and structured comparison pages) also supports traditional search rankings. The tactics reinforce each other. The main difference is that AI citation work puts more weight on off-site presence and content format, whereas traditional SEO leans harder on technical signals and backlink authority.

How long does it take to appear in AI responses?

There’s no reliable answer, and anyone claiming otherwise is guessing. LLMs update their training data on different schedules, and retrieval-augmented models like Perplexity pull from live web content, so timing varies significantly. Some brands report appearing in AI responses within weeks of publishing high-quality third-party content. Others take longer. Consistent publishing across the right surfaces is the only controllable variable.

Can small brands compete with established names for AI citations?

Yes, more than in traditional SEO. LLMs don’t exclusively favor domain authority. They favor relevance and specificity. A small brand that’s consistently cited in niche subreddits, has detailed G2 reviews, and publishes focused LinkedIn articles on a specific use case can outperform a larger brand that’s generic across channels.

What’s the difference between a citation and a mention in AI responses?

A citation means the AI response links to or explicitly attributes a specific source. A mention means your brand name appears in the response without a direct source attribution. Both matter, but citations carry more weight. They signal that an AI platform treats your content as a reference, not just a data point. Nightwatch’s AI Tracker distinguishes between direct recommendations, comparisons, and mentions, so you can see the quality of your brand’s presence, not just its frequency.
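The distinction can be sketched in a few lines. This is an illustrative simplification, not how any tracker actually classifies responses. It assumes you already have the response text and the list of source URLs the platform attached to it, and it narrows "citation" to a link pointing at your own domain. The function name, brand, and domain below are hypothetical.

```python
from urllib.parse import urlparse


def classify_presence(response_text, cited_urls, brand_name, brand_domain):
    """Return 'citation', 'mention', or 'absent' for one AI response."""
    for url in cited_urls:
        host = urlparse(url).hostname or ""
        # Match the domain itself or any subdomain (e.g. www.example.com).
        if host == brand_domain or host.endswith("." + brand_domain):
            return "citation"
    if brand_name.lower() in response_text.lower():
        return "mention"
    return "absent"


# Hypothetical inputs for illustration.
print(classify_presence(
    "Acme is often recommended for small teams.",
    ["https://www.example.com/blog/crm-guide"],
    "Acme",
    "example.com",
))  # citation
print(classify_presence(
    "Acme is often recommended for small teams.",
    ["https://reviews.example.org/best-crm"],
    "Acme",
    "example.com",
))  # mention
```

A fuller version would also count third-party pages that discuss your brand as sources, since those are the citations this guide argues matter most.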

Building brand citations in AI search takes time, but it’s trackable

AI search visibility and traditional search visibility are increasingly connected. The brands getting cited in ChatGPT and Perplexity tend to have strong third-party credibility, consistent off-site presence, and content structured around the questions AI is actually answering. Building that presence is a content and distribution strategy, not a technical one.

The tracking piece matters just as much. Off-domain citation work is hard to evaluate without data. You need to know which prompts you’re appearing in, where your competitors are outranking you, and whether the content you’re publishing is shifting your AI Visibility Score over time.

Start tracking your brand’s AI citation visibility with Nightwatch and monitor Share of Voice across ChatGPT, Perplexity, and Google AI Overviews from one dashboard.