How to Build a GEO Content Strategy From Scratch

Quick Takeaways

- GEO starts with prompts, not keywords. Map how your audience questions AI models before writing anything

- On-domain content (blogs, comparison hubs, landing pages) is still the foundation. 57% of B2B LLM citations come from blogs and listicles

- Off-domain presence (Reddit, YouTube, case studies) is where a growing share of AI citations originate

- Content structure matters as much as authority. Chunk answers in 40–80 words and front-load your responses

- Tracking AI visibility is non-negotiable. You can’t refine a strategy you can’t measure

Introduction

You’ve been doing SEO well. Your rankings are solid. Then you search your category in ChatGPT or Perplexity, and your brand isn’t in the response. A competitor you’ve been outranking for years is getting recommended instead.

This is the central problem with applying a traditional SEO playbook to generative engine optimization. The mechanics are different. Keyword rankings, title tags, and backlink counts still matter, but they’re not sufficient anymore. AI models pull citations from a broader set of signals: content structure, brand authority across platforms, community presence, and whether your content directly answers the questions people are actually posing to AI.

This guide walks through how to build a GEO content strategy from scratch: how to identify the right prompts to target, how to build content AI models want to cite, how to extend your footprint off your own domain, and how to track whether any of it is working.

Start with prompts, not keywords

Traditional SEO starts with a keyword list. GEO starts with a prompt map.

The distinction matters because AI models don’t process queries the way search engines do. Users ask conversational, multi-part questions: “What’s the best project management tool for a remote team under 20 people?” rather than “best project management software.” Your content strategy needs to match that query shape, not just the surface-level keyword.

How to map the prompts your audience actually uses

A prompt map is a list of the full-sentence questions your target audience is likely to ask AI models at each stage of their decision-making process. Think across the funnel: discovery questions (“what’s the difference between X and Y?”), evaluation questions (“which tool is better for [specific use case]?”), and validation questions (“is [your brand] worth it?”).

Good sources for prompt research include customer support threads, sales call notes, community forums like Reddit, and your own Google Search Console data filtered for question-format queries. The goal is to get inside the actual language your audience uses when they don’t have to fit their query into a keyword box.

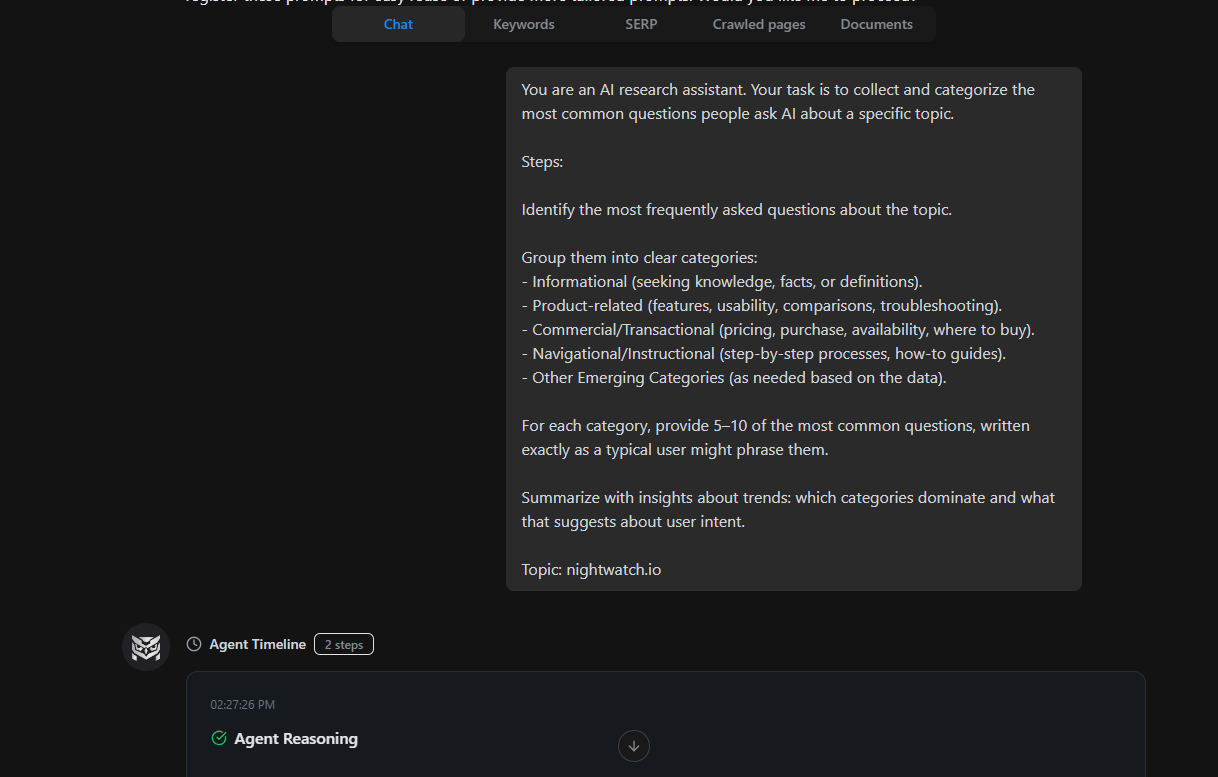

To speed this up, head to NightOwl and ask the AI agent to collect and categorize the most common questions people ask AI about a specific topic.

Here’s a sample prompt you can use:

You are an AI research assistant. Your task is to collect and categorize the most common questions people ask AI about a specific topic.

Steps:

- Identify the most frequently asked questions about the topic.

- Group them into clear categories:

  - Informational (seeking knowledge, facts, or definitions)
  - Product-related (features, usability, comparisons, troubleshooting)
  - Commercial/Transactional (pricing, purchase, availability, where to buy)
  - Navigational/Instructional (step-by-step processes, how-to guides)
  - Other Emerging Categories (as needed based on the data)

- For each category, provide 5–10 of the most common questions, written exactly as a typical user might phrase them.

- Summarize with insights about trends: which categories dominate and what that suggests about user intent.

Topic: (Your chosen topic)

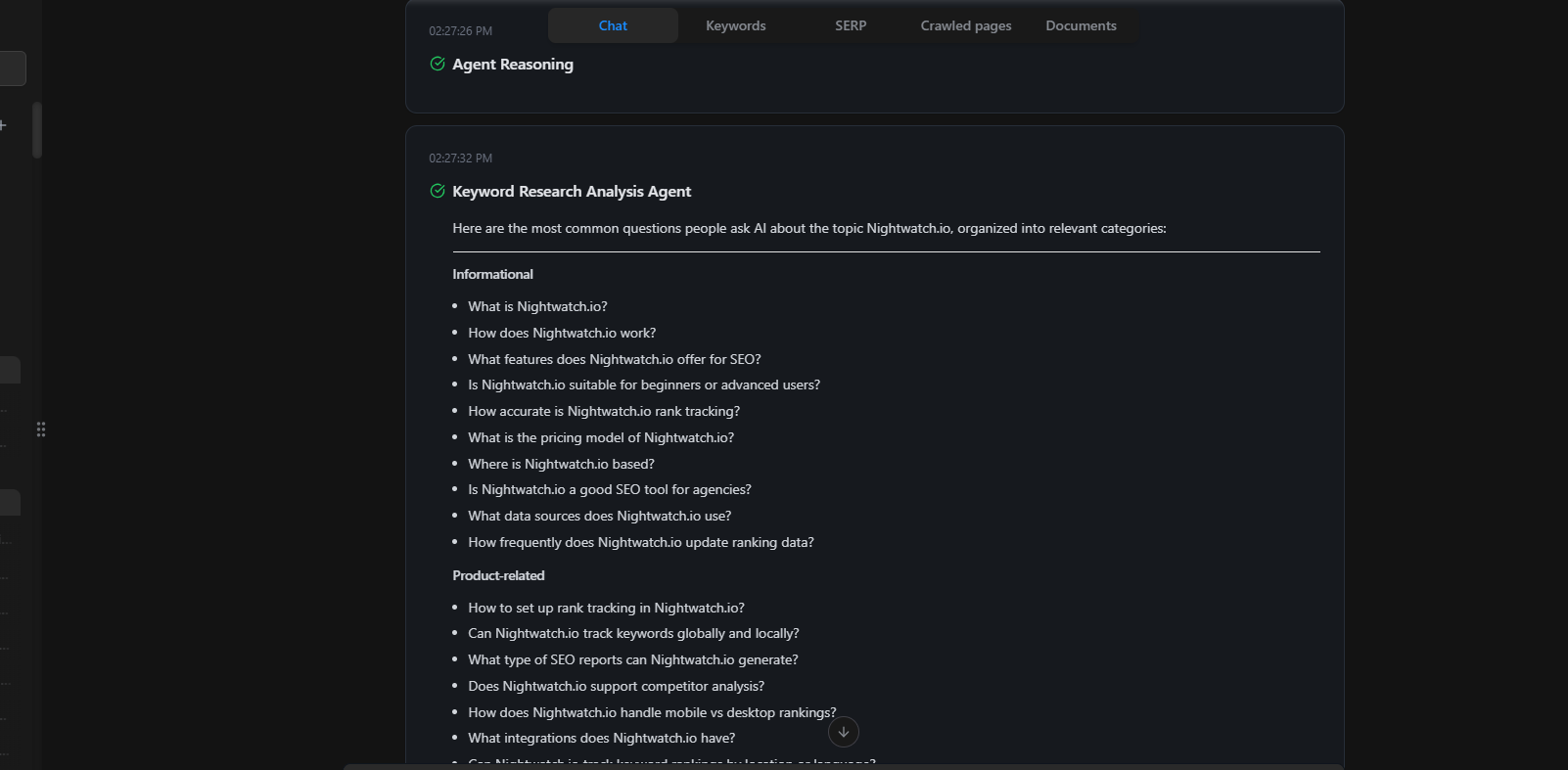

The agent generates a set of relevant prompts your audience is likely to ask across AI models. It also cross-references those prompts with how your brand currently appears in AI responses, so you can see the gaps between where you’re showing up and where you should be.

This is also a good place to practice AI keyword research with intent-first rather than volume-first thinking.

Turning prompt maps into content priorities

Once you have 30–50 prompts, group them by topic cluster and buying stage. The clusters where you have strong on-domain content but weak AI visibility are your first-priority targets. The clusters where you have neither are your longer-term build.

Prompts where your competitors are getting cited and you’re not are particularly useful. They tell you that AI models have decided this is a category where authoritative answers exist, and your content just isn’t structured to compete for them yet.
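The prioritization rule above can be sketched in a few lines of Python. This is a minimal illustration, not a NightOwl or Nightwatch feature: the `PromptCluster` fields, the hypothetical 30% cut-off for “weak” visibility, and the tie-break that moves competitor-cited clusters ahead within a tier are all assumptions to adapt to your own data.

```python
from dataclasses import dataclass

# Hypothetical threshold: below this share of prompts, visibility counts as "weak".
WEAK_VISIBILITY = 0.30

# Sort order for the tiers returned by priority().
TIER_ORDER = {"first": 0, "long-term": 1, "maintain": 2}


@dataclass
class PromptCluster:
    name: str                 # topic cluster, e.g. "PM tools for remote teams"
    stage: str                # buying stage: "discovery", "evaluation", "validation"
    has_strong_content: bool  # do you already have solid on-domain pages for it?
    visibility: float         # share of the cluster's prompts where you appear (0.0-1.0)
    competitor_cited: bool    # are competitors cited on prompts where you are not?


def priority(cluster: PromptCluster) -> str:
    """Assign a cluster to a tier using the rule described in the text."""
    weak = cluster.visibility < WEAK_VISIBILITY
    if weak and cluster.has_strong_content:
        return "first"      # content exists but isn't structured to get cited
    if weak:
        return "long-term"  # neither content nor visibility: build from scratch
    return "maintain"       # already visible: monitor and refresh


def rank(clusters: list[PromptCluster]) -> list[PromptCluster]:
    """Order clusters by tier; within a tier, competitor-cited gaps come first."""
    return sorted(
        clusters,
        key=lambda c: (TIER_ORDER[priority(c)], not c.competitor_cited),
    )


if __name__ == "__main__":
    clusters = [
        PromptCluster("Is [brand] worth it", "validation", True, 0.60, False),
        PromptCluster("X vs Y", "discovery", False, 0.00, True),
        PromptCluster("PM tools for remote teams", "evaluation", True, 0.10, True),
    ]
    for c in rank(clusters):
        print(f"{priority(c):<10} {c.name}")
```

Sorting on a `(tier, not competitor_cited)` tuple keeps the rule readable: `False` sorts before `True`, so within each tier the clusters where competitors already win citations rise to the top.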

Build your on-domain content foundation

Why comparison hubs and listicles get cited most

AI models are trying to give users the most direct, credible answer possible. Comparison content and listicles do two things well: they cover a topic with enough breadth to serve as a reference point, and they’re structured in a way that’s easy to extract a specific answer from.

If you don’t have a comparison hub for your category, that’s the first content gap to fill. Think: “[Your category] tools compared,” “[Problem] solutions ranked by use case,” or “X vs Y: which is better for [specific context].” These pages become citation anchors for a wide range of prompts.

How to structure content AI models want to reference

The structural principles that make content AI-citable are fairly well established:

- Front-load the answer. Don’t bury the response in three paragraphs of context. Put the direct answer in the first 1–2 sentences, then support it.

- Use 40–80 word answer chunks. Each section should be self-contained enough to be extracted and cited independently. If a section requires reading the previous one to make sense, it won’t get pulled into an AI response.

- Semantic triples. Structure claims as subject-verb-object: “[Brand] does [thing] for [outcome].” This matches how AI models parse and attribute information.

- Entity pages per feature or category. If you have multiple products, use cases, or audience segments, each should have its own dedicated page rather than being consolidated into one long overview.
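A quick way to audit existing pages against the 40–80 word guideline is to measure the opening paragraph of each section. The sketch below is a rough heuristic under stated assumptions: it treats the text before the first blank line in a section as the answer chunk, counts whitespace-separated words, and expects you to have split the page into a plain `dict` of heading to body yourself.

```python
# Target range for a self-contained answer chunk, per the guideline above.
MIN_WORDS, MAX_WORDS = 40, 80


def answer_chunk(body: str) -> str:
    """Return the opening paragraph (text before the first blank line)."""
    return body.strip().split("\n\n", 1)[0]


def audit_sections(sections: dict[str, str]) -> list[tuple[str, int, str]]:
    """Flag sections whose opening answer chunk falls outside 40-80 words."""
    report = []
    for heading, body in sections.items():
        words = len(answer_chunk(body).split())
        if words < MIN_WORDS:
            verdict = "too short"
        elif words > MAX_WORDS:
            verdict = "too long"
        else:
            verdict = "ok"
        report.append((heading, words, verdict))
    return report


if __name__ == "__main__":
    page = {
        "What is GEO?": "word " * 55 + "\n\n" + "Supporting detail. " * 20,
        "How long does GEO take?": "It depends on many factors.",
    }
    for heading, words, verdict in audit_sections(page):
        print(f"{heading}: {words} words ({verdict})")
```

Only the opening paragraph is measured because that is the part a model is most likely to lift; supporting detail after the first blank line can run as long as it needs to.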

Run a content gap analysis against your prompt map. You’ll likely find clusters where you have content, but it’s not structured for AI citation, and clusters where you have no content at all.

The role of original data and proprietary perspective

Generic aggregation doesn’t get cited. AI models have access to hundreds of generic posts covering the same ground; giving them a reason to cite yours specifically means offering something they can’t get elsewhere.

Original data is the most reliable way to do this. Surveys, internal product analytics, customer research, or even a well-documented case study from a single client give you citable facts that don’t exist anywhere else. A proprietary point of view, consistently applied across your content, builds the same kind of differentiation over time.

| Content type | AI citation likelihood | Why |
|---|---|---|
| Comparison hubs / listicles | High | Structured for extraction; broad prompt coverage |
| Original research / surveys | High | Unique data AI can’t find elsewhere |
| Landing pages (feature-specific) | Medium-high | Directly answers evaluation prompts |
| Entity pages (per use case) | Medium-high | Precise match to specific prompts |
| Generic blog posts | Low | Easily substituted with other sources |
| Category overviews (no distinct POV) | Low | No reason to prefer over competitors |

Is your brand point of view doing enough work?

The sites AI models cite most consistently have something in common: they take a clear position. Not on everything, but on the questions their audience actually cares about.

If your content reads like a neutral overview (“some companies prefer X, others prefer Y, it depends on your situation”), it’s easy to pass over. AI models are synthesizing multiple sources; they’re looking for content that contributes a distinct perspective to the synthesis, not content that mirrors what’s already in it.

What a brand POV looks like in practice

A brand point of view doesn’t require being contrarian for its own sake. It means your content reflects a consistent set of beliefs about how your category works, what matters and what doesn’t, and why your approach is the right one for your audience.

Practically, this looks like: opinionated guides (“here’s why we think [approach X] is wrong for [context]”), data-backed arguments (“our analysis of 500 customer accounts shows that [finding]”), and firm recommendations rather than hedged “it depends” conclusions.

A brand point of view, a proprietary data flywheel, and a source-diversification plan are the three pillars of a citation-worthy content strategy, and they reinforce each other. Your POV gives you something worth citing; your proprietary data makes it credible; distributing it across multiple source types makes it discoverable.

How proprietary data creates a citation flywheel

When you publish original findings, other writers reference them. Those references create additional citations across domains. AI models, trained on a broad corpus of web content, then encounter your data in multiple contexts and are more likely to treat it as authoritative.

This isn’t an overnight process, but it is a compounding one. A single original research piece, syndicated and referenced over 12 months, can build more GEO authority than 20 generic posts built on the same publicly available information.

Off-domain: where AI citations actually come from

There’s a persistent misconception that GEO is purely an on-domain content problem. It’s not.

AI models pull citations from wherever authoritative, relevant content exists, not just your website. A well-structured answer in a high-engagement Reddit thread, a YouTube comment, a forum post: these are all fair game, and none of them requires backlinks, domain authority, or any traditional SEO work to get picked up.

This matters for how you allocate effort. Off-domain presence isn’t a nice-to-have layer on top of your content strategy. For many citation types, it’s the primary driver.

Reddit, YouTube, and community content as citation seeds

Community platforms are, from an AI training and retrieval perspective, high-signal environments. Responses that get upvoted, saved, or referenced in follow-up threads carry social proof that AI models pick up on.

Ethan Smith, CEO of Graphite, shared on Lenny’s Podcast that ChatGPT traffic converts 6x better than Google search, with Webflow’s data as the proof point. The content types driving that traffic were landing pages, YouTube videos, and Reddit comments.

A single YouTube video that directly answers a high-intent customer question can keep working long after it’s published, and a well-timed post in an industry subreddit can reach an audience that’s both targeted and highly engaged. Neither requires a large budget or a production team, yet both can have an impact well beyond what they cost to create.

Content syndication and case studies

Case studies are particularly powerful for GEO. They’re specific, verifiable, and hard to replicate: exactly the properties that make content worth citing. A case study showing how a customer solved a specific problem with your product answers evaluation prompts directly, in a format AI models find easy to extract from.

Syndicating your best content (or publishing original versions) on platforms like LinkedIn, industry newsletters, and niche publications builds your content distribution footprint across domains. When AI models encounter your positioning across five different sources rather than one, it registers as broader authority.

Why PR is now a GEO input, not just brand comms

Earned media (press coverage, journalist articles, and industry write-ups) is becoming the backbone of GEO visibility, because many of the signals that influence what an AI cites don’t come from your own website at all. They come from credible third-party sources.

A mention in a trade publication, a quoted expert appearance in an industry roundup, or a partnership announcement covered by a respected outlet all create citation-eligible references across authoritative domains. These references get picked up by AI models in ways that pure link-building often doesn’t.

PR isn’t a replacement for content strategy. But if your GEO plan doesn’t include a plan for third-party coverage, you’re leaving a meaningful share of the citation pool uncovered.

What does a good GEO content calendar look like?

A GEO content calendar maps content types to citation channels, not just keywords to publish dates.

The distinction matters because the sequencing logic is different. You build citation authority in layers: on-domain foundation first, then off-domain amplification, then measurement-driven iteration. Publishing 10 comparison posts simultaneously before you’ve established any off-domain presence is less effective than publishing three, distributing them deliberately, and tracking what gets cited.

Balancing new content with refreshing existing assets

A common mistake when building a GEO strategy is treating it as a net-new content project. Most sites already have content that could perform well in AI citations with structural changes: front-loading answers, adding entity pages for major product features, or updating posts that contain original research but haven’t been refreshed in 18 months.

Refreshing existing assets with GEO-oriented structure often produces faster results than new content because the domain authority is already there. Audit your highest-traffic posts first. Look for ones that answer high-volume prompts but bury the answer, lack structured answer chunks, or don’t include original data or a clear POV.

Sequencing your first six weeks

A practical starting point for a new GEO content strategy:

- Weeks 1–2: Audit and prompt mapping. Run a prompt research session in NightOwl to generate your target prompt set. Audit existing content against those prompts to identify what can be restructured vs. what needs to be built.

- Weeks 3–4: On-domain foundation. Prioritize your comparison hub and your two or three highest-value entity pages. Restructure the top existing posts identified in the audit.

- Weeks 5–6: Off-domain seeding. Publish syndicated versions of your best original content on LinkedIn and relevant industry platforms. Identify two or three community spaces where your audience asks questions AI models will reference.

From week seven onward, you’re in iteration mode: tracking AI visibility, identifying new citation gaps, and building content to fill them.

How do you measure whether your GEO strategy is working?

This is where a lot of GEO strategies fall apart. There’s no shortage of frameworks and tactical advice, but measurement is what separates a real strategy from a content publishing exercise. Skepticism from the SEO community around GEO advice is largely warranted precisely because so little of it addresses attribution clearly.

The metrics that matter are different from traditional SEO. Rankings and traffic are downstream; AI visibility is the leading indicator.

The metrics that matter

- AI Visibility Score: The percentage of relevant prompts where your brand appears in an AI-generated response. This is your headline GEO metric. A rising score means your content strategy is working; a flat or declining score against a growing prompt set means you’re not keeping pace.

- Share of Voice: Your brand mentions relative to competitors across the AI responses you’re tracking. This tells you whether you’re gaining ground in competitive citation sets or losing it.

- Average Position: Where in an AI response your brand typically appears. Position 1 (cited first or recommended first) carries more weight than position 4 or 5. Track this over time against specific prompt clusters.

- Citations Dashboard: Which domains AI models reference when discussing your category. This tells you which off-domain placements are actually translating into citations, so you can double down on the channels that are working.
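To make these definitions concrete, here is one way to compute the first three metrics, plus a simple domain tally in the spirit of the Citations Dashboard, from a sample of AI responses you have collected yourself. This is a sketch, not Nightwatch’s scoring method: the `AIResponse` record, the assumption of one response per tracked prompt, and defining Share of Voice as your mentions divided by all tracked-brand mentions are simplifications of our own.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AIResponse:
    prompt: str
    brands: list[str]  # brands in the order they appear in the answer
    cited_domains: list[str] = field(default_factory=list)


def visibility_score(responses: list[AIResponse], brand: str) -> float:
    """Percentage of tracked prompts whose response mentions the brand."""
    if not responses:
        return 0.0
    hits = sum(1 for r in responses if brand in r.brands)
    return 100 * hits / len(responses)


def share_of_voice(responses: list[AIResponse], brand: str, competitors: list[str]) -> float:
    """Brand mentions as a percentage of all mentions of tracked brands."""
    tracked = {brand, *competitors}
    mentions = Counter(b for r in responses for b in r.brands if b in tracked)
    total = sum(mentions.values())
    return 100 * mentions[brand] / total if total else 0.0


def average_position(responses: list[AIResponse], brand: str) -> Optional[float]:
    """Mean 1-based position of the brand, over responses that mention it."""
    positions = [r.brands.index(brand) + 1 for r in responses if brand in r.brands]
    return sum(positions) / len(positions) if positions else None


def top_cited_domains(responses: list[AIResponse], n: int = 5) -> list[tuple[str, int]]:
    """Most frequently cited domains across all collected responses."""
    return Counter(d for r in responses for d in r.cited_domains).most_common(n)


if __name__ == "__main__":
    sample = [
        AIResponse("best PM tool for a remote team", ["Acme", "YourBrand"], ["reddit.com", "g2.com"]),
        AIResponse("YourBrand vs Acme", ["YourBrand", "Acme"], ["yourbrand.com", "reddit.com"]),
        AIResponse("cheapest PM tool", ["Acme", "Other"], ["g2.com"]),
        AIResponse("PM tool for agencies", ["Other"]),
    ]
    print("Visibility:", visibility_score(sample, "YourBrand"))
    print("Share of Voice:", round(share_of_voice(sample, "YourBrand", ["Acme", "Other"]), 1))
    print("Average position:", average_position(sample, "YourBrand"))
    print("Top domains:", top_cited_domains(sample))
```

Average position is computed only over responses that mention the brand, which is why it should be read alongside the visibility score rather than on its own.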

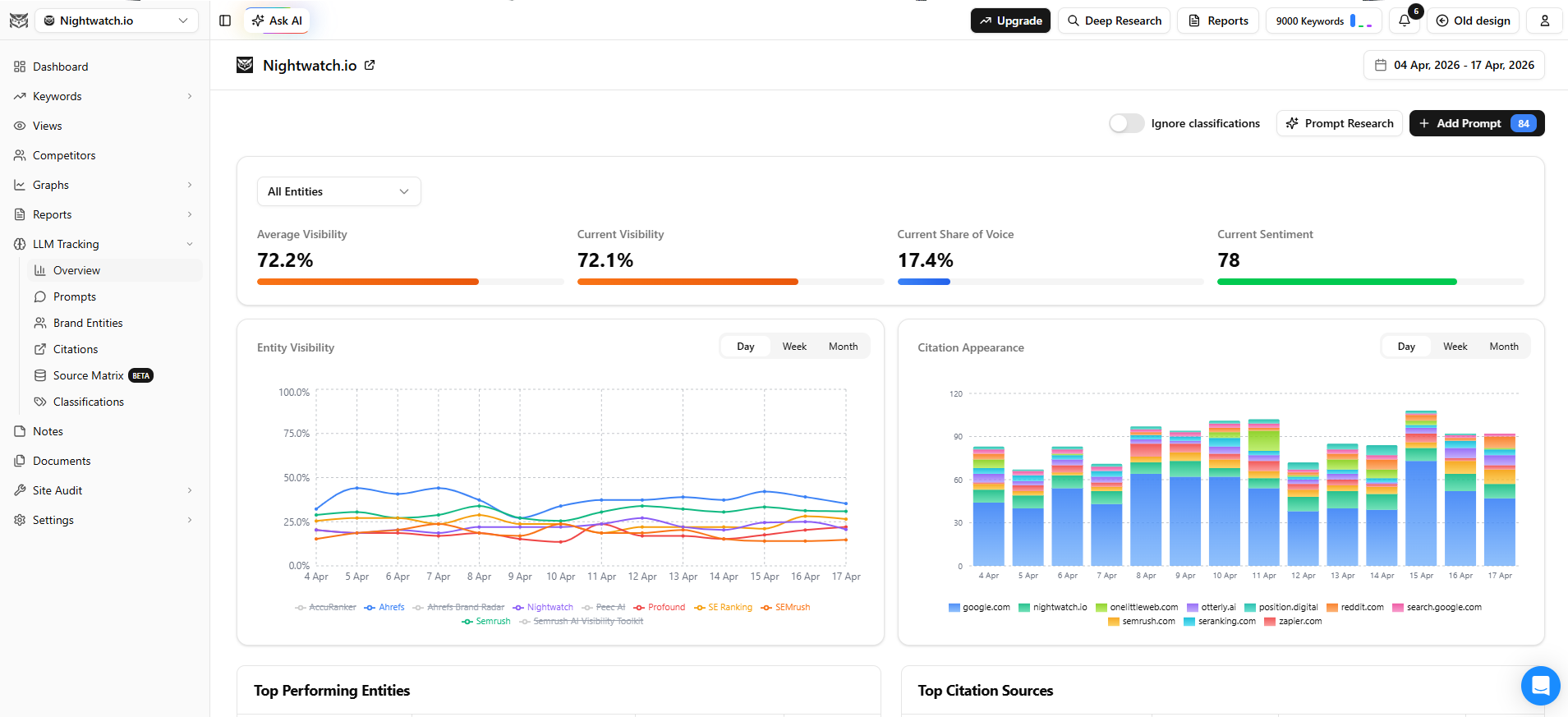

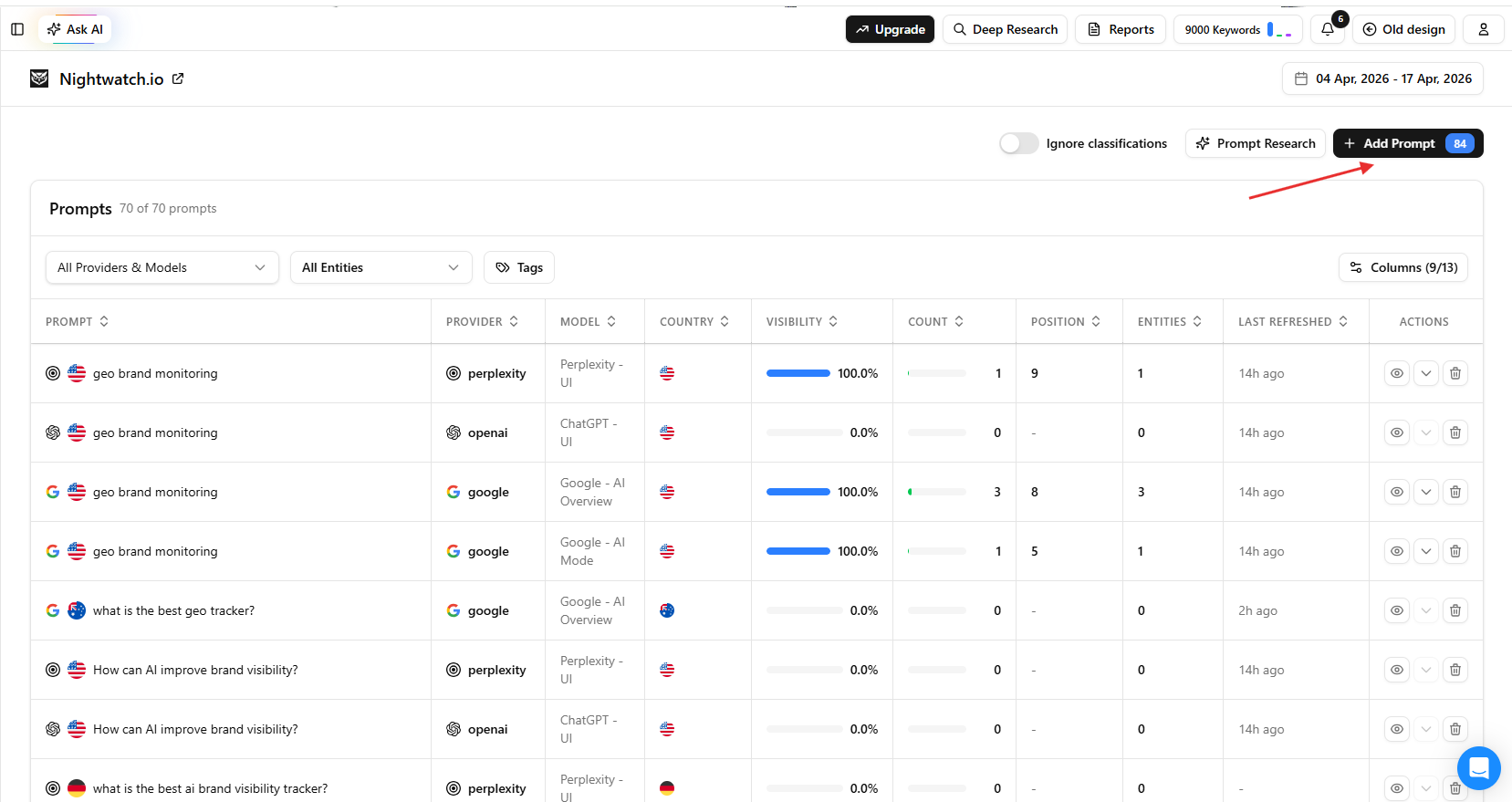

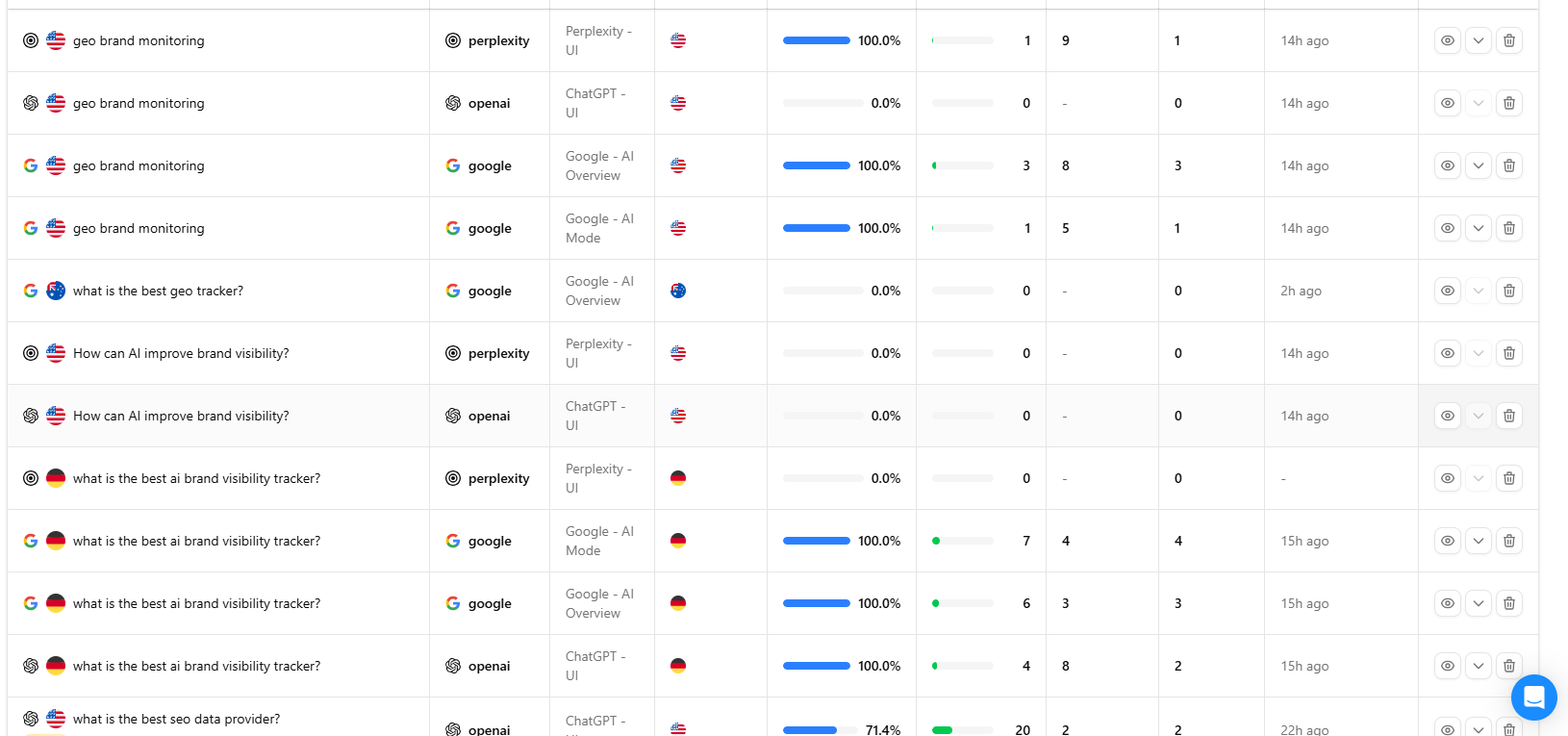

Tracking GEO performance in Nightwatch

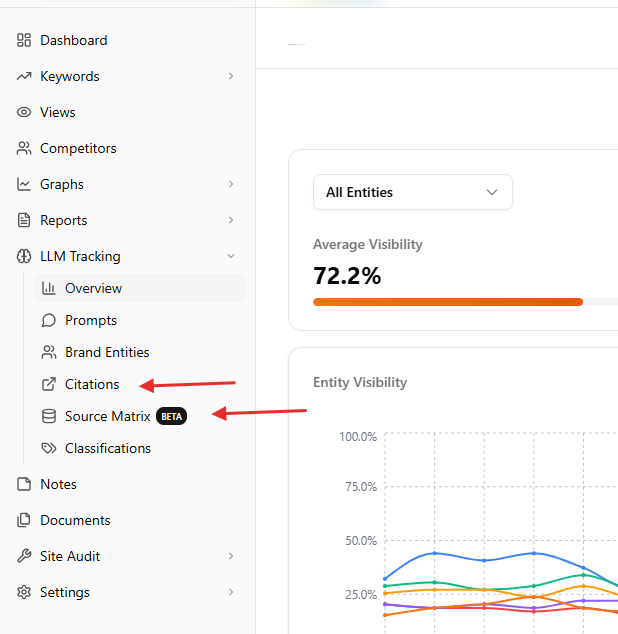

Nightwatch’s LLM tracking tools unify all of these metrics in a single dashboard.


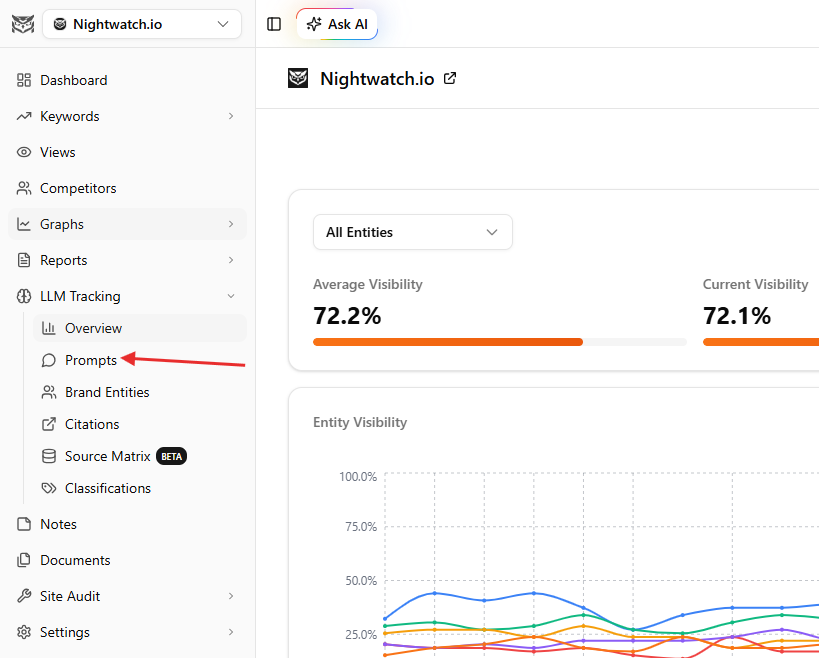

Step 1: Open your Nightwatch dashboard, select your website from the client list on the left, and navigate to the LLM Tracking section.

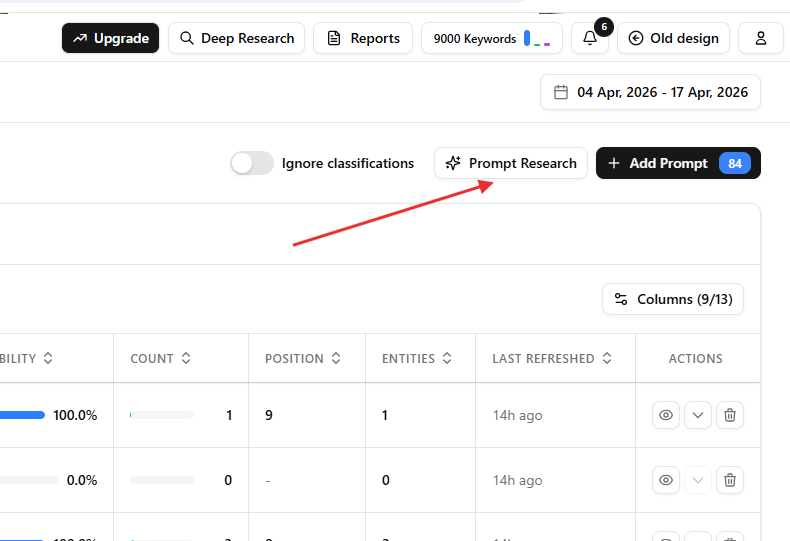

Then go to the Prompts tab.

Click “Add Prompt” to import your prompt set from the mapping exercise. Enter each prompt, then choose the AI providers and location filters you want to track.

If you’re not sure which prompts to start with, click “Prompt Research” to generate a relevant set through NightOwl’s agentic workflow.


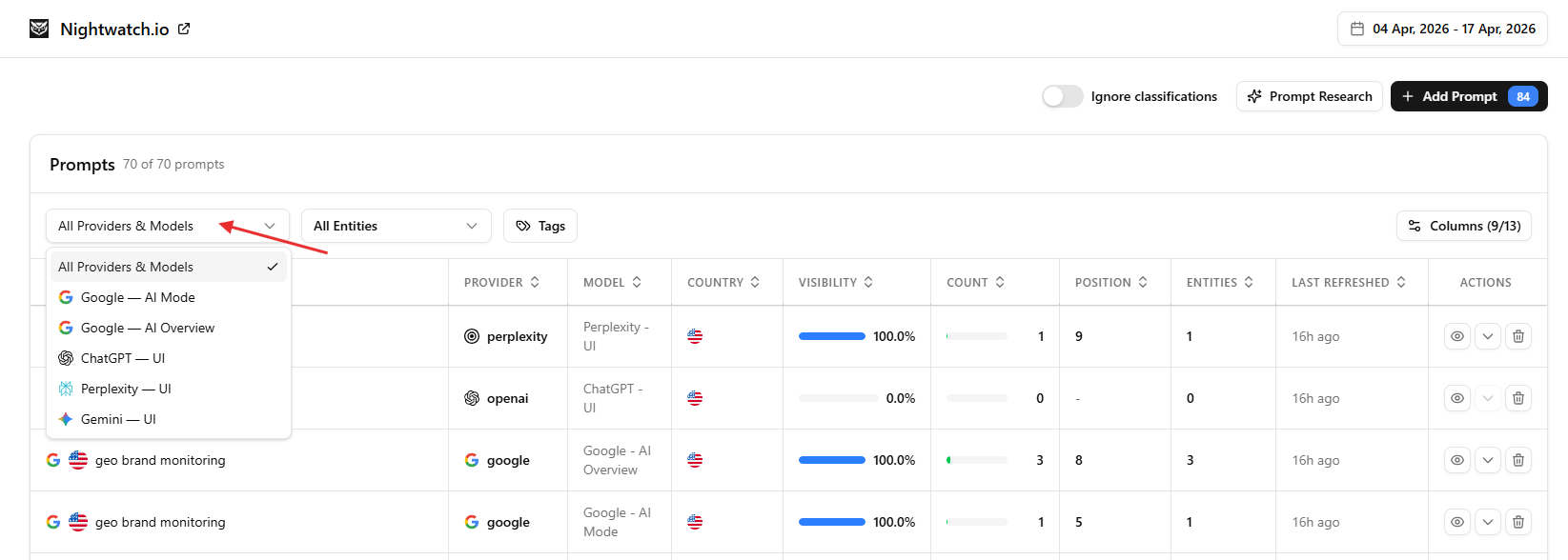

Step 2: Set your tracked AI models. Nightwatch monitors ChatGPT, Perplexity, Google AI Mode, and AI Overview by default.


Step 3: Once data starts collecting, head back to Overview for your first visibility audit. You’ll see average visibility, Share of Voice vs. competitors, sentiment, entity visibility, and how brand performance is trending across AI responses.

To go deeper on any individual prompt, open it from the Prompts table to see position, sentiment, and the full AI response via the eye icon.


Step 4: Review the Citations Dashboard to see which domains AI models are referencing when they mention your category. For a broader view of how mentions and sentiment are distributed across the web, use Source Matrix.

This shows which external pages are influencing how your brand appears in AI responses. Cross-reference both with your off-domain content plan to identify where you have presence gaps.

Connecting AI citation trends back to search traffic

AI visibility and search visibility are not separate problems. When AI models recommend your brand, users frequently follow up by searching for it by name.

Most teams are tracking GEO and traditional SEO in separate tools, which means they’re missing the relationship between the two.

Nightwatch tracks both in one dashboard. Your rank tracking data sits alongside your LLM visibility metrics, so when your AI Visibility Score rises, you can immediately check whether branded search volume and keyword rankings are moving in the same direction. When they are, you have attribution. When they aren’t, you have a signal that your GEO content is getting cited but not yet driving the follow-through you need.

Tracking both in the same place is what makes the relationship visible.
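The comparison described in this section can be reduced to a simple directional check between two reporting periods. A minimal sketch, assuming you have exported AI Visibility Score and branded search volume for each period; the function names and the 5% change threshold are hypothetical, not Nightwatch settings. Treat the result as a directional signal to investigate.

```python
def pct_change(before: float, after: float) -> float:
    """Relative change between two periods (0.10 means +10%)."""
    if before == 0:
        # No baseline: any growth counts as an unbounded increase.
        return float("inf") if after > 0 else 0.0
    return (after - before) / before


def attribution_signal(
    visibility_before: float,
    visibility_after: float,
    branded_before: float,
    branded_after: float,
    threshold: float = 0.05,  # hypothetical minimum change that counts as "moving"
) -> str:
    """Classify whether AI visibility gains coincide with branded search gains."""
    visibility_up = pct_change(visibility_before, visibility_after) >= threshold
    search_up = pct_change(branded_before, branded_after) >= threshold
    if visibility_up and search_up:
        return "moving together"
    if visibility_up:
        return "cited, but no follow-through yet"
    return "no visibility gain to attribute"


if __name__ == "__main__":
    # Example: visibility 22% -> 31%, branded searches 1,400 -> 1,650 per month.
    print(attribution_signal(22, 31, 1400, 1650))
```

The three return values mirror the cases in the text: both metrics rising together, visibility rising without branded search following, and no visibility gain to explain in the first place.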

FAQs

How long does it take to see results from a GEO strategy?

Early signals typically appear within 4–8 weeks if you’re restructuring existing high-authority content and building off-domain presence simultaneously. AI models update their citation pools more frequently than people assume. That said, significant shifts in AI Visibility Score and Share of Voice usually take 3–6 months of consistent effort to become statistically meaningful.
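One way to judge whether a change in AI Visibility Score is “statistically meaningful” is to compare the same prompt set at two points in time. Because each prompt is measured twice, a paired test such as McNemar’s fits better than comparing two independent percentages. This is our illustration rather than a method from the source: it assumes you record, per prompt, whether your brand appeared in each snapshot, and it uses the uncorrected McNemar statistic with a chi-square (1 degree of freedom) tail.

```python
import math


def mcnemar_p_value(before: dict[str, bool], after: dict[str, bool]) -> float:
    """Two-sided McNemar test (no continuity correction) on paired prompt results.

    `before` and `after` map each tracked prompt to whether your brand appeared.
    Only prompts present in both snapshots are compared.
    """
    shared = before.keys() & after.keys()
    lost = sum(1 for p in shared if before[p] and not after[p])    # visible -> not visible
    gained = sum(1 for p in shared if not before[p] and after[p])  # not visible -> visible
    if lost + gained == 0:
        return 1.0  # no prompt changed status, so no evidence of a shift
    chi2 = (gained - lost) ** 2 / (gained + lost)
    # Upper tail of a chi-square with 1 degree of freedom: P(X > chi2) = erfc(sqrt(chi2 / 2)).
    return math.erfc(math.sqrt(chi2 / 2))


if __name__ == "__main__":
    prompts = [f"prompt {i}" for i in range(40)]
    before = {p: i < 8 for i, p in enumerate(prompts)}   # visible on 8 of 40
    after = {p: i < 18 for i, p in enumerate(prompts)}   # visible on 18 of 40
    print(f"p = {mcnemar_p_value(before, after):.4f}")
```

Only prompts that changed status carry information here, which is one reason small prompt sets take longer to show a meaningful shift. When just a handful of prompts change status, the chi-square approximation is rough and an exact binomial version of the test is the safer choice.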

Does GEO replace traditional SEO?

No. The traditional SEO vs AI SEO question gets framed as either/or, but the evidence doesn’t support that framing. Search and AI are increasingly interlinked: many AI-generated answers prompt follow-up searches, and the on-domain content that performs well in traditional search still forms the majority of AI citation sources. GEO adds a layer on top of SEO; it doesn’t replace it.

What’s the difference between GEO and AEO?

The terms are often used interchangeably. Generative Engine Optimization (GEO) usually refers to optimizing for AI-generated responses in tools like ChatGPT, Perplexity, and Google AI Mode. Answer Engine Optimization (AEO) is a broader term that sometimes includes featured snippets and voice search. In practice, the content and measurement approaches overlap significantly.

Can small sites compete for AI citations?

Yes, with caveats. Domain authority still matters, but it’s not the only factor. Small sites with highly specific original data, a clear POV, or strong community presence in niche forums have shown up in AI citations against much larger competitors. The off-domain approach, particularly authentic community participation and specific case studies, is more accessible to smaller sites than trying to outproduce established content operations.

Build your GEO content strategy and track every gain

Building a GEO content strategy isn’t a one-time project. It’s an ongoing cycle: map prompts, build and structure content, extend your footprint across domains, measure AI visibility, and iterate based on what’s actually getting cited.

The brands showing up consistently in AI responses in 2026 started this process 12 to 18 months ago. The brands that start now will be the ones showing up consistently in 2027.

Start tracking your AI visibility in Nightwatch from day one, so you know what’s working, not just what’s published.